mirror of

https://github.com/huggingface/lerobot.git

synced 2026-05-16 17:20:05 +00:00

docs(benchmarks): add benchmark integration guide and standardize benchmark docs

Add a comprehensive guide for adding new benchmarks to LeRobot, and refactor the existing LIBERO and Meta-World docs to follow the new standardized template.

Made-with: Cursor

This commit is contained in:

+90

-81

@@ -1,36 +1,61 @@

|

||||

# LIBERO

|

||||

|

||||

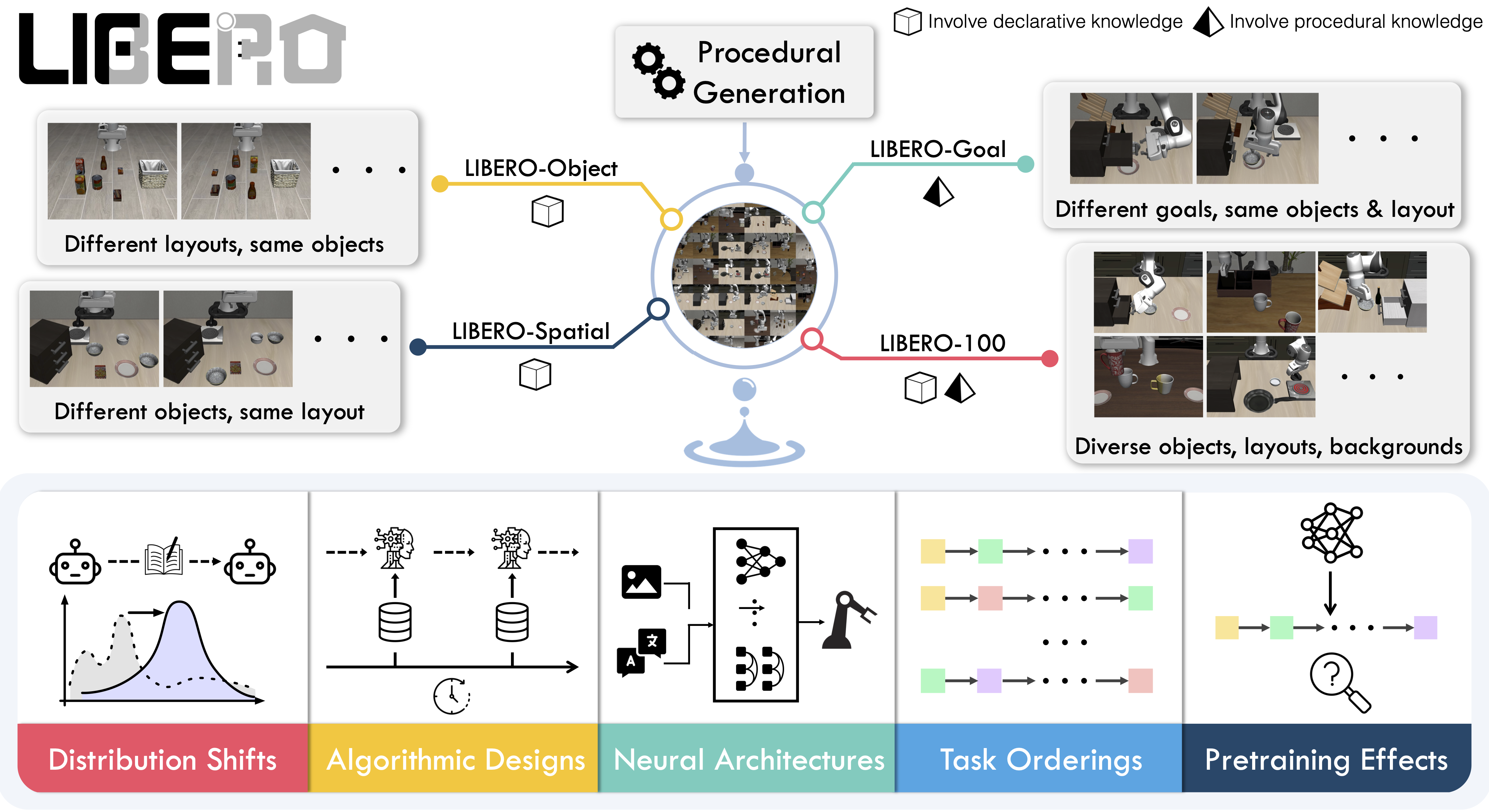

**LIBERO** is a benchmark designed to study **lifelong robot learning**. The idea is that robots won’t just be pretrained once in a factory, they’ll need to keep learning and adapting with their human users over time. This ongoing adaptation is called **lifelong learning in decision making (LLDM)**, and it’s a key step toward building robots that become truly personalized helpers.

|

||||

LIBERO is a benchmark designed to study **lifelong robot learning** — the idea that robots need to keep learning and adapting with their users over time, not just be pretrained once. It provides a set of standardized manipulation tasks that focus on **knowledge transfer**: how well a robot can apply what it has already learned to new situations. By evaluating on LIBERO, different algorithms can be compared fairly and researchers can build on each other's work.

|

||||

|

||||

- 📄 [LIBERO paper](https://arxiv.org/abs/2306.03310)

|

||||

- 💻 [Original LIBERO repo](https://github.com/Lifelong-Robot-Learning/LIBERO)

|

||||

|

||||

To make progress on this challenge, LIBERO provides a set of standardized tasks that focus on **knowledge transfer**: how well a robot can apply what it has already learned to new situations. By evaluating on LIBERO, different algorithms can be compared fairly and researchers can build on each other’s work.

|

||||

|

||||

LIBERO includes **five task suites**:

|

||||

|

||||

- **LIBERO-Spatial (`libero_spatial`)** – tasks that require reasoning about spatial relations.

|

||||

- **LIBERO-Object (`libero_object`)** – tasks centered on manipulating different objects.

|

||||

- **LIBERO-Goal (`libero_goal`)** – goal-conditioned tasks where the robot must adapt to changing targets.

|

||||

- **LIBERO-90 (`libero_90`)** – 90 short-horizon tasks from the LIBERO-100 collection.

|

||||

- **LIBERO-Long (`libero_10`)** – 10 long-horizon tasks from the LIBERO-100 collection.

|

||||

|

||||

Together, these suites cover **130 tasks**, ranging from simple object manipulations to complex multi-step scenarios. LIBERO is meant to grow over time, and to serve as a shared benchmark where the community can test and improve lifelong learning algorithms.

|

||||

- Paper: [Benchmarking Knowledge Transfer for Lifelong Robot Learning](https://arxiv.org/abs/2306.03310)

|

||||

- GitHub: [Lifelong-Robot-Learning/LIBERO](https://github.com/Lifelong-Robot-Learning/LIBERO)

|

||||

- Project website: [libero-project.github.io](https://libero-project.github.io)

|

||||

|

||||

|

||||

|

||||

## Evaluating with LIBERO

|

||||

## Available tasks

|

||||

|

||||

At **LeRobot**, we ported [LIBERO](https://github.com/Lifelong-Robot-Learning/LIBERO) into our framework and used it mainly to **evaluate [SmolVLA](https://huggingface.co/docs/lerobot/en/smolvla)**, our lightweight Vision-Language-Action model.

|

||||

LIBERO includes **five task suites** covering **130 tasks**, ranging from simple object manipulations to complex multi-step scenarios:

|

||||

|

||||

LIBERO is now part of our **multi-eval supported simulation**, meaning you can benchmark your policies either on a **single suite of tasks** or across **multiple suites at once** with just a flag.

|

||||

| Suite          | CLI name         | Tasks | Description                                        |
| -------------- | ---------------- | ----- | -------------------------------------------------- |
| LIBERO-Spatial | `libero_spatial` | 10    | Tasks requiring reasoning about spatial relations  |
| LIBERO-Object  | `libero_object`  | 10    | Tasks centered on manipulating different objects   |
| LIBERO-Goal    | `libero_goal`    | 10    | Goal-conditioned tasks with changing targets       |
| LIBERO-90      | `libero_90`      | 90    | Short-horizon tasks from the LIBERO-100 collection |
| LIBERO-Long    | `libero_10`      | 10    | Long-horizon tasks from the LIBERO-100 collection  |

|

||||

|

||||

To Install LIBERO, after following LeRobot official instructions, just do:

|

||||

`pip install -e ".[libero]"`

|

||||

## Installation

|

||||

|

||||

After following the LeRobot installation instructions:

|

||||

|

||||

```bash
pip install -e ".[libero]"
```

|

||||

|

||||

<Tip>

|

||||

LIBERO requires Linux (`sys_platform == 'linux'`). LeRobot uses MuJoCo for simulation — set the rendering backend before training or evaluation:

|

||||

|

||||

```bash
export MUJOCO_GL=egl # for headless servers (HPC, cloud)
```

|

||||

|

||||

</Tip>

|

||||

|

||||

## Evaluation

|

||||

|

||||

### Default evaluation (recommended)

|

||||

|

||||

Evaluate across the four standard suites (10 episodes per task):

|

||||

|

||||

```bash
lerobot-eval \
  --policy.path="your-policy-id" \
  --env.type=libero \
  --env.task=libero_spatial,libero_object,libero_goal,libero_10 \
  --eval.batch_size=1 \
  --eval.n_episodes=10 \
  --env.max_parallel_tasks=1
```

|

||||

|

||||

### Single-suite evaluation

|

||||

|

||||

Evaluate a policy on one LIBERO suite:

|

||||

Evaluate on one LIBERO suite:

|

||||

|

||||

```bash

|

||||

lerobot-eval \

|

||||

@@ -42,15 +67,13 @@ lerobot-eval \

|

||||

```

|

||||

|

||||

- `--env.task` picks the suite (`libero_object`, `libero_spatial`, etc.).

|

||||

- `--env.task_ids` picks task ids to run (`[0]`, `[1,2,3]`, etc.). Omit this flag (or set it to `null`) to run all tasks in the suite.

|

||||

- `--env.task_ids` restricts to specific task indices (`[0]`, `[1,2,3]`, etc.). Omit to run all tasks in the suite.

|

||||

- `--eval.batch_size` controls how many environments run in parallel.

|

||||

- `--eval.n_episodes` sets how many episodes to run in total.

|

||||

|

||||

---

|

||||

- `--eval.n_episodes` sets how many episodes to run per task.

|

||||

|

||||

### Multi-suite evaluation

|

||||

|

||||

Benchmark a policy across multiple suites at once:

|

||||

Benchmark a policy across multiple suites at once by passing a comma-separated list:

|

||||

|

||||

```bash

|

||||

lerobot-eval \

|

||||

@@ -61,50 +84,49 @@ lerobot-eval \

|

||||

--eval.n_episodes=2

|

||||

```

|

||||

|

||||

- Pass a comma-separated list to `--env.task` for multi-suite evaluation.

|

||||

### Control mode

|

||||

|

||||

### Control Mode

|

||||

LIBERO supports two control modes — `relative` (default) and `absolute`. Different VLA checkpoints are trained with different action parameterizations, so make sure the mode matches your policy:

|

||||

|

||||

LIBERO now supports two control modes: relative and absolute. This matters because different VLA checkpoints are trained with different mode of action to output hence control parameterizations.

|

||||

You can switch them with: `env.control_mode = "relative"` and `env.control_mode = "absolute"`

|

||||

```bash
--env.control_mode=relative # or "absolute"
```

|

||||

|

||||

### Policy inputs and outputs

|

||||

|

||||

When using LIBERO through LeRobot, policies interact with the environment via **observations** and **actions**:

|

||||

**Observations:**

|

||||

|

||||

- **Observations**

|

||||

- `observation.state` – proprioceptive features (agent state).

|

||||

- `observation.images.image` – main camera view (`agentview_image`).

|

||||

- `observation.images.image2` – wrist camera view (`robot0_eye_in_hand_image`).

|

||||

- `observation.state` — 8-dim proprioceptive features (eef position, axis-angle orientation, gripper qpos)

|

||||

- `observation.images.image` — main camera view (`agentview_image`), HWC uint8

|

||||

- `observation.images.image2` — wrist camera view (`robot0_eye_in_hand_image`), HWC uint8

|

||||

|

||||

⚠️ **Note:** LeRobot enforces the `.images.*` prefix for any multi-modal visual features. Always ensure that your policy config `input_features` use the same naming keys, and that your dataset metadata keys follow this convention during evaluation.

|

||||

If your data contains different keys, you must rename the observations to match what the policy expects, since naming keys are encoded inside the normalization statistics layer.

|

||||

This will be fixed with the upcoming Pipeline PR.

|

||||

<Tip warning={true}>

|

||||

LeRobot enforces the `.images.*` prefix for visual features. Ensure your
policy config `input_features` use the same naming keys, and that your dataset
metadata keys follow this convention. If your data contains different keys,
you must rename the observations to match what the policy expects, since
naming keys are encoded inside the normalization statistics layer.

|

||||

</Tip>
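The renaming step described in the tip can be sketched in plain Python. This is a minimal illustration under stated assumptions, not LeRobot's API: `rename_observation_keys` and `KEY_MAP` are hypothetical names, and the mapping assumes raw observations keyed by the LIBERO camera names quoted in this guide.

```python
# Hypothetical mapping from raw LIBERO camera names to the LeRobot-style keys
# listed in this guide. Adjust the left-hand side to whatever your data uses.
KEY_MAP = {
    "agentview_image": "observation.images.image",
    "robot0_eye_in_hand_image": "observation.images.image2",
}


def rename_observation_keys(obs: dict, key_map: dict) -> dict:
    """Return a copy of `obs` with keys renamed; unmapped keys pass through unchanged."""
    renamed = {}
    for key, value in obs.items():
        new_key = key_map.get(key, key)
        if new_key in renamed:
            # Two source keys collapsing onto one target would silently drop data.
            raise KeyError(f"duplicate key after renaming: {new_key}")
        renamed[new_key] = value
    return renamed


if __name__ == "__main__":
    raw = {
        "agentview_image": "frame0",            # placeholder for an HWC uint8 array
        "robot0_eye_in_hand_image": "frame1",   # placeholder for an HWC uint8 array
        "observation.state": [0.0] * 8,         # 8-dim proprioceptive state
    }
    print(sorted(rename_observation_keys(raw, KEY_MAP)))
```

Renaming once, when the dataset is prepared, keeps the keys aligned with the ones stored alongside the normalization statistics, so no remapping is needed at evaluation time.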

|

||||

|

||||

- **Actions**

|

||||

- Continuous control values in a `Box(-1, 1, shape=(7,))` space.

|

||||

**Actions:**

|

||||

|

||||

We also provide a notebook for quick testing:

|

||||

Training with LIBERO

|

||||

- Continuous control in `Box(-1, 1, shape=(7,))` — 6D end-effector delta + 1D gripper

|

||||

|

||||

## Training with LIBERO

|

||||

### Recommended evaluation episodes

|

||||

|

||||

When training on LIBERO tasks, make sure your dataset parquet and metadata keys follow the LeRobot convention.

|

||||

For reproducible benchmarking, use **10 episodes per task** across all four standard suites (Spatial, Object, Goal, Long). This gives 400 total episodes and matches the protocol used for published results.
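The 400-episode figure follows from the task counts in the suite table. The arithmetic can be sketched as below; `STANDARD_SUITES` and `total_episodes` are illustrative names, not part of LeRobot.

```python
# Task counts per suite, as listed in the suite table of this guide.
STANDARD_SUITES = {
    "libero_spatial": 10,
    "libero_object": 10,
    "libero_goal": 10,
    "libero_10": 10,
}


def total_episodes(suites: dict, n_episodes_per_task: int) -> int:
    """Total rollouts when every task in every suite runs the same number of episodes."""
    return sum(suites.values()) * n_episodes_per_task


if __name__ == "__main__":
    # 4 suites x 10 tasks x 10 episodes per task
    print(total_episodes(STANDARD_SUITES, 10))
```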

|

||||

|

||||

The environment expects:

|

||||

## Training

|

||||

|

||||

- `observation.state` → 8-dim agent state

|

||||

- `observation.images.image` → main camera (`agentview_image`)

|

||||

- `observation.images.image2` → wrist camera (`robot0_eye_in_hand_image`)

|

||||

### Dataset

|

||||

|

||||

⚠️ Cleaning the dataset upfront is **cleaner and more efficient** than remapping keys inside the code.

|

||||

To avoid potential mismatches and key errors, we provide a **preprocessed LIBERO dataset** that is fully compatible with the current LeRobot codebase and requires no additional manipulation:

|

||||

👉 [HuggingFaceVLA/libero](https://huggingface.co/datasets/HuggingFaceVLA/libero)

|

||||

We provide a preprocessed LIBERO dataset fully compatible with LeRobot:

|

||||

|

||||

For reference, here is the **original dataset** published by Physical Intelligence:

|

||||

👉 [physical-intelligence/libero](https://huggingface.co/datasets/physical-intelligence/libero)

|

||||

- [HuggingFaceVLA/libero](https://huggingface.co/datasets/HuggingFaceVLA/libero)

|

||||

|

||||

---

|

||||

For reference, the original dataset published by Physical Intelligence:

|

||||

|

||||

- [physical-intelligence/libero](https://huggingface.co/datasets/physical-intelligence/libero)

|

||||

|

||||

### Example training command

|

||||

|

||||

@@ -121,52 +143,39 @@ lerobot-train \

|

||||

--batch_size=4 \

|

||||

--eval.batch_size=1 \

|

||||

--eval.n_episodes=1 \

|

||||

--eval_freq=1000 \

|

||||

--eval_freq=1000

|

||||

```

|

||||

|

||||

---

|

||||

## Reproducing published results

|

||||

|

||||

### Note on rendering

|

||||

We reproduce the results of Pi0.5 on the LIBERO benchmark. We take the Physical Intelligence LIBERO base model (`pi05_libero`) and finetune for an additional 6k steps in bfloat16, with batch size of 256 on 8 H100 GPUs using the [HuggingFace LIBERO dataset](https://huggingface.co/datasets/HuggingFaceVLA/libero).

|

||||

|

||||

LeRobot uses MuJoCo for simulation. You need to set the rendering backend before training or evaluation:

|

||||

The finetuned model: [lerobot/pi05_libero_finetuned](https://huggingface.co/lerobot/pi05_libero_finetuned)

|

||||

|

||||

- `export MUJOCO_GL=egl` → for headless servers (e.g. HPC, cloud)

|

||||

|

||||

## Reproducing π₀.₅ results

|

||||

|

||||

We reproduce the results of π₀.₅ on the LIBERO benchmark using the LeRobot implementation. We take the Physical Intelligence LIBERO base model (`pi05_libero`) and finetune for an additional 6k steps in bfloat16, with batch size of 256 on 8 H100 GPUs using the [HuggingFace LIBERO dataset](https://huggingface.co/datasets/HuggingFaceVLA/libero).

|

||||

|

||||

The finetuned model can be found here:

|

||||

|

||||

- **π₀.₅ LIBERO**: [lerobot/pi05_libero_finetuned](https://huggingface.co/lerobot/pi05_libero_finetuned)

|

||||

|

||||

We then evaluate the finetuned model using the LeRobot LIBERO implementation, by running the following command:

|

||||

### Evaluation command

|

||||

|

||||

```bash

|

||||

lerobot-eval \

|

||||

--output_dir=/logs/ \

|

||||

--output_dir=./eval_logs/ \

|

||||

--env.type=libero \

|

||||

--env.task=libero_spatial,libero_object,libero_goal,libero_10 \

|

||||

--eval.batch_size=1 \

|

||||

--eval.n_episodes=10 \

|

||||

--policy.path=pi05_libero_finetuned \

|

||||

--policy.n_action_steps=10 \

|

||||

--output_dir=./eval_logs/ \

|

||||

--env.max_parallel_tasks=1

|

||||

```

|

||||

|

||||

**Note:** We set `n_action_steps=10`, similar to the original OpenPI implementation.

|

||||

We set `n_action_steps=10`, matching the original OpenPI implementation.

|

||||

|

||||

### Results

|

||||

|

||||

We obtain the following results on the LIBERO benchmark:

|

||||

| Model               | LIBERO Spatial | LIBERO Object | LIBERO Goal | LIBERO 10 | Average  |
| ------------------- | -------------- | ------------- | ----------- | --------- | -------- |
| **Pi0.5 (LeRobot)** | 97.0           | 99.0          | 98.0        | 96.0      | **97.5** |

|

||||

|

||||

| Model    | LIBERO Spatial | LIBERO Object | LIBERO Goal | LIBERO 10 | Average  |
| -------- | -------------- | ------------- | ----------- | --------- | -------- |
| **π₀.₅** | 97.0           | 99.0          | 98.0        | 96.0      | **97.5** |

|

||||

These results are consistent with the [original results](https://github.com/Physical-Intelligence/openpi/tree/main/examples/libero#results) reported by Physical Intelligence:

|

||||

|

||||

These results are consistent with the original [results](https://github.com/Physical-Intelligence/openpi/tree/main/examples/libero#results) reported by Physical Intelligence:

|

||||

|

||||

| Model    | LIBERO Spatial | LIBERO Object | LIBERO Goal | LIBERO 10 | Average   |
| -------- | -------------- | ------------- | ----------- | --------- | --------- |
| **π₀.₅** | 98.8           | 98.2          | 98.0        | 92.4      | **96.85** |

|

||||

| Model              | LIBERO Spatial | LIBERO Object | LIBERO Goal | LIBERO 10 | Average   |
| ------------------ | -------------- | ------------- | ----------- | --------- | --------- |
| **Pi0.5 (OpenPI)** | 98.8           | 98.2          | 98.0        | 92.4      | **96.85** |
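The Average column in both tables appears to be the unweighted mean of the four suite scores. A small sketch to check that reading, using only the numbers reported above (`RESULTS` and `suite_average` are illustrative names):

```python
# Per-suite success rates (%) copied from the two results tables:
# order is Spatial, Object, Goal, LIBERO 10.
RESULTS = {
    "LeRobot": [97.0, 99.0, 98.0, 96.0],
    "OpenPI": [98.8, 98.2, 98.0, 92.4],
}


def suite_average(rates: list) -> float:
    """Unweighted mean over suites, rounded to two decimals."""
    return round(sum(rates) / len(rates), 2)


if __name__ == "__main__":
    for name, rates in RESULTS.items():
        print(name, suite_average(rates))
```

Because every suite here has 10 tasks evaluated with the same number of episodes, the unweighted mean over suites coincides with the mean over all episodes.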

|

||||

|

||||
