mirror of

https://github.com/huggingface/lerobot.git

synced 2026-05-11 22:59:50 +00:00

Compare commits

205 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

| 4e671ef080 | |||

| cf9796b2f7 | |||

| 88116b11e1 | |||

| cf0c3f0a9a | |||

| ee48a80e4d | |||

| cb0fb8ad15 | |||

| f79fdf7205 | |||

| a305f5f46a | |||

| 45348d7b69 | |||

| d4c1c123c6 | |||

| da861139a3 | |||

| 4f51f7153c | |||

| 9027c7866f | |||

| c2bf226082 | |||

| f84c20d403 | |||

| 4c4462edea | |||

| 0b710932e2 | |||

| 9a19f8f6f4 | |||

| 3504d17fef | |||

| d35ed3fd83 | |||

| ce5b27d255 | |||

| 9dcb407ba7 | |||

| 5eb5bf7164 | |||

| 65fb5d3b1a | |||

| d6a24e2882 | |||

| d51bbe9492 | |||

| d8c875e069 | |||

| eff5b90542 | |||

| a1a3fa435d | |||

| 79c3466f0f | |||

| e1d433cbfc | |||

| 16e82fd29f | |||

| ae57fe2d33 | |||

| e3306951c0 | |||

| 10e36f2453 | |||

| 9204a8bccd | |||

| 43eedf62e4 | |||

| c51d40ad56 | |||

| 5c1d930a34 | |||

| 8d20ca1625 | |||

| e4df9ccb63 | |||

| 086815edb7 | |||

| c9243c29b0 | |||

| e7617076ca | |||

| 221e5862ea | |||

| 1e1b010257 | |||

| def71cc439 | |||

| 4557655ab1 | |||

| 28298fbe78 | |||

| f84affec23 | |||

| dad0babbf5 | |||

| fc5cd05fb0 | |||

| d01b060d24 | |||

| 7da15ba069 | |||

| b0a5b88c21 | |||

| 42fbcc89c5 | |||

| 9767120eb4 | |||

| 852713dc84 | |||

| 1f38712c95 | |||

| 0ffc5b4741 | |||

| a1b1643ff6 | |||

| 7739fe12e4 | |||

| be9bdc242f | |||

| 195cc79c49 | |||

| f8d42cc038 | |||

| 1797dea3d5 | |||

| 825c0666a9 | |||

| 47bc670ad2 | |||

| aa505d4192 | |||

| e380653c62 | |||

| bf5c037959 | |||

| 1234e71cfb | |||

| b1ff7132c1 | |||

| b357a8c4d8 | |||

| 0be53ef3e1 | |||

| aed90c8042 | |||

| 0b5da92a58 | |||

| 599218fe9a | |||

| 2507341a32 | |||

| bde397e891 | |||

| 76e260c401 | |||

| 5179515d81 | |||

| 8ad00d1ee7 | |||

| 7440d772ff | |||

| a4fc02a636 | |||

| f5c39d6292 | |||

| 3f616f0ebe | |||

| 9698e74e88 | |||

| 04d55e4670 | |||

| 7dce022a05 | |||

| cc05067a76 | |||

| bead25a58a | |||

| c877e98658 | |||

| a4c88d6340 | |||

| 34ca077d78 | |||

| 2a901f8134 | |||

| 450be9d7d1 | |||

| 681be962ae | |||

| b16e18f978 | |||

| 652e3cb859 | |||

| 2a5c757d58 | |||

| 6d4e983197 | |||

| ecda7482c7 | |||

| 7124d471c1 | |||

| a14af62ee3 | |||

| ac80f1f081 | |||

| feb3fed5e8 | |||

| 8d5f519fcb | |||

| b9d3c34ae4 | |||

| 5f759b1637 | |||

| 6a75b4761a | |||

| e5ade5565d | |||

| 0524551f52 | |||

| 862bc7ef85 | |||

| d38792d6e5 | |||

| db3cf0158c | |||

| 0535f2a59a | |||

| 2805ae347c | |||

| 28ef6fcd14 | |||

| 7fc7ec75bb | |||

| 87890cbf38 | |||

| 5326ffe77e | |||

| a1734cf575 | |||

| 82f300e880 | |||

| 3e7c9d7afc | |||

| e9cb779eab | |||

| 8ff95be04c | |||

| f02ce69df0 | |||

| 1feb7b5d88 | |||

| fbe9009db2 | |||

| c0013b130b | |||

| c4763f61a1 | |||

| b95c219d96 | |||

| 9b1138171e | |||

| 023b8f3466 | |||

| 1cad87ebd2 | |||

| 99de7567e6 | |||

| 21baa8fa02 | |||

| 8b4a5368b3 | |||

| f5c6b03b61 | |||

| e7be2fd113 | |||

| b632490b4b | |||

| 9a9c7208d2 | |||

| 427b97d198 | |||

| 2c2bb1e8bf | |||

| 4b24f94225 | |||

| 670a278cbc | |||

| fc74001202 | |||

| f14ac5d486 | |||

| 7bd0d62ce5 | |||

| 7eccefe235 | |||

| b72274066e | |||

| 20f2910b63 | |||

| fd4ae3466b | |||

| 7beb040e8e | |||

| 05bd18f453 | |||

| 8077456c00 | |||

| 5595887fd0 | |||

| 41959389b6 | |||

| 2c4e888c7f | |||

| 5ced72e6b8 | |||

| 907023f9f7 | |||

| 4ba23ea029 | |||

| 409ac0baca | |||

| 699363f9fc | |||

| ae7a54de57 | |||

| fb9139b882 | |||

| 9fe3a3fb17 | |||

| 26cb9a24c3 | |||

| 77106697c3 | |||

| 75bc44c166 | |||

| f2b79656eb | |||

| 14c2ece004 | |||

| 35612c61e1 | |||

| f7bb3e2d90 | |||

| 1e0d667a22 | |||

| 33969a0337 | |||

| fa26290e8c | |||

| e9f7f5127b | |||

| 097842c70f | |||

| 3b8a3a32a0 | |||

| 1c56779dd9 | |||

| 83a4338f8b | |||

| 730c7b2f35 | |||

| 116059a43e | |||

| b08149a113 | |||

| c227107f60 | |||

| 01dc289f3d | |||

| 6830ca7645 | |||

| ed42c71fc3 | |||

| e0139065bd | |||

| e509f255af | |||

| e2fcd140b0 | |||

| 2a7a0e6129 | |||

| 9f33791b19 | |||

| 453e0a995f | |||

| 8ebf79c494 | |||

| 8774aec304 | |||

| ac742c9f0d | |||

| cd13f1ecfd | |||

| 9aa632968f | |||

| 62caaf07b0 | |||

| 3355f04ca6 | |||

| 769f531603 | |||

| f6c7287ae7 |

@@ -25,7 +25,7 @@ body:

|

||||

id: system-info

|

||||

attributes:

|

||||

label: System Info

|

||||

description: Please share your LeRobot configuration by running `lerobot-info` (if installed) or `python -m lerobot.scripts.display_sys_info` (if not installed) and pasting the output below.

|

||||

description: If needed, you can share your lerobot configuration with us by running `python -m lerobot.scripts.display_sys_info` and copy-pasting its outputs below

|

||||

render: Shell

|

||||

placeholder: lerobot version, OS, python version, numpy version, torch version, and lerobot's configuration

|

||||

validations:

|

||||

|

||||

@@ -30,7 +30,7 @@ pytest -sx tests/test_stuff.py::test_something

|

||||

```

|

||||

|

||||

```bash

|

||||

lerobot-train --some.option=true

|

||||

python -m lerobot.scripts.train --some.option=true

|

||||

```

|

||||

|

||||

## SECTION TO REMOVE BEFORE SUBMITTING YOUR PR

|

||||

|

||||

@@ -29,8 +29,8 @@ on:

|

||||

env:

|

||||

UV_VERSION: "0.8.0"

|

||||

PYTHON_VERSION: "3.10"

|

||||

DOCKER_IMAGE_NAME_CPU: huggingface/lerobot-cpu:latest

|

||||

DOCKER_IMAGE_NAME_GPU: huggingface/lerobot-gpu:latest

|

||||

DOCKER_IMAGE_NAME_CPU: huggingface/lerobot-gpu:latest

|

||||

DOCKER_IMAGE_NAME_GPU: huggingface/lerobot-cpu:latest

|

||||

|

||||

# Ensures that only the latest commit is built, canceling older runs.

|

||||

concurrency:

|

||||

|

||||

@@ -1,68 +0,0 @@

|

||||

# Copyright 2025 The HuggingFace Inc. team. All rights reserved.

|

||||

#

|

||||

# Licensed under the Apache License, Version 2.0 (the "License");

|

||||

# you may not use this file except in compliance with the License.

|

||||

# You may obtain a copy of the License at

|

||||

#

|

||||

# http://www.apache.org/licenses/LICENSE-2.0

|

||||

#

|

||||

# Unless required by applicable law or agreed to in writing, software

|

||||

# distributed under the License is distributed on an "AS IS" BASIS,

|

||||

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

# See the License for the specific language governing permissions and

|

||||

# limitations under the License.

|

||||

|

||||

# This workflow handles closing stale issues and PRs.

|

||||

name: Stale

|

||||

on:

|

||||

# Allows running this workflow manually from the Actions tab

|

||||

workflow_dispatch:

|

||||

|

||||

# Runs at 02:00

|

||||

schedule:

|

||||

- cron: "0 2 * * *"

|

||||

|

||||

env:

|

||||

CLOSE_ISSUE_MESSAGE: >

|

||||

This issue was closed because it has been stalled for 14 days with no activity.

|

||||

Feel free to reopen if it is still relevant, or to ping a collaborator if you have any questions.

|

||||

CLOSE_PR_MESSAGE: >

|

||||

This PR was closed because it has been stalled for 14 days with no activity.

|

||||

Feel free to reopen if it is still relevant, or to ping a collaborator if you have any questions.

|

||||

WARN_ISSUE_MESSAGE: >

|

||||

This issue has been automatically marked as stale because it has not had

|

||||

recent activity (180 days). It will be closed if no further activity occurs.

|

||||

Thank you for your contributions.

|

||||

WARN_PR_MESSAGE: >

|

||||

This PR has been automatically marked as stale because it has not had

|

||||

recent activity (180 days). It will be closed if no further activity occurs.

|

||||

Thank you for your contributions.

|

||||

|

||||

jobs:

|

||||

# This job runs the actions/stale action to close stale issues and PRs.

|

||||

stale:

|

||||

name: Close Stale Issues and PRs

|

||||

runs-on: ubuntu-latest

|

||||

permissions:

|

||||

actions: write

|

||||

contents: write # only for delete-branch option

|

||||

issues: write

|

||||

pull-requests: write

|

||||

steps:

|

||||

- uses: actions/stale@v10

|

||||

with:

|

||||

repo-token: ${{ secrets.GITHUB_TOKEN }}

|

||||

stale-issue-label: stale

|

||||

stale-pr-label: stale

|

||||

exempt-issue-labels: never-stale

|

||||

exempt-pr-labels: never-stale

|

||||

days-before-issue-stale: 180 # TODO(Steven): Will modify this to 90 after initial cleanup

|

||||

days-before-issue-close: 14

|

||||

days-before-pr-stale: 180

|

||||

days-before-pr-close: 14

|

||||

delete-branch: true

|

||||

close-issue-message: ${{ env.CLOSE_ISSUE_MESSAGE }}

|

||||

close-pr-message: ${{ env.CLOSE_PR_MESSAGE }}

|

||||

stale-issue-message: ${{ env.WARN_ISSUE_MESSAGE }}

|

||||

stale-pr-message: ${{ env.WARN_PR_MESSAGE }}

|

||||

operations-per-run: 500

|

||||

@@ -173,7 +173,3 @@ outputs/

|

||||

|

||||

# Dev folders

|

||||

.cache/*

|

||||

*.stl

|

||||

*.urdf

|

||||

*.xml

|

||||

*.part

|

||||

|

||||

@@ -44,7 +44,7 @@ test-end-to-end:

|

||||

${MAKE} DEVICE=$(DEVICE) test-smolvla-ete-eval

|

||||

|

||||

test-act-ete-train:

|

||||

lerobot-train \

|

||||

python -m lerobot.scripts.train \

|

||||

--policy.type=act \

|

||||

--policy.dim_model=64 \

|

||||

--policy.n_action_steps=20 \

|

||||

@@ -68,12 +68,12 @@ test-act-ete-train:

|

||||

--output_dir=tests/outputs/act/

|

||||

|

||||

test-act-ete-train-resume:

|

||||

lerobot-train \

|

||||

python -m lerobot.scripts.train \

|

||||

--config_path=tests/outputs/act/checkpoints/000002/pretrained_model/train_config.json \

|

||||

--resume=true

|

||||

|

||||

test-act-ete-eval:

|

||||

lerobot-eval \

|

||||

python -m lerobot.scripts.eval \

|

||||

--policy.path=tests/outputs/act/checkpoints/000004/pretrained_model \

|

||||

--policy.device=$(DEVICE) \

|

||||

--env.type=aloha \

|

||||

@@ -82,7 +82,7 @@ test-act-ete-eval:

|

||||

--eval.batch_size=1

|

||||

|

||||

test-diffusion-ete-train:

|

||||

lerobot-train \

|

||||

python -m lerobot.scripts.train \

|

||||

--policy.type=diffusion \

|

||||

--policy.down_dims='[64,128,256]' \

|

||||

--policy.diffusion_step_embed_dim=32 \

|

||||

@@ -106,7 +106,7 @@ test-diffusion-ete-train:

|

||||

--output_dir=tests/outputs/diffusion/

|

||||

|

||||

test-diffusion-ete-eval:

|

||||

lerobot-eval \

|

||||

python -m lerobot.scripts.eval \

|

||||

--policy.path=tests/outputs/diffusion/checkpoints/000002/pretrained_model \

|

||||

--policy.device=$(DEVICE) \

|

||||

--env.type=pusht \

|

||||

@@ -115,7 +115,7 @@ test-diffusion-ete-eval:

|

||||

--eval.batch_size=1

|

||||

|

||||

test-tdmpc-ete-train:

|

||||

lerobot-train \

|

||||

python -m lerobot.scripts.train \

|

||||

--policy.type=tdmpc \

|

||||

--policy.device=$(DEVICE) \

|

||||

--policy.push_to_hub=false \

|

||||

@@ -137,7 +137,7 @@ test-tdmpc-ete-train:

|

||||

--output_dir=tests/outputs/tdmpc/

|

||||

|

||||

test-tdmpc-ete-eval:

|

||||

lerobot-eval \

|

||||

python -m lerobot.scripts.eval \

|

||||

--policy.path=tests/outputs/tdmpc/checkpoints/000002/pretrained_model \

|

||||

--policy.device=$(DEVICE) \

|

||||

--env.type=xarm \

|

||||

@@ -148,7 +148,7 @@ test-tdmpc-ete-eval:

|

||||

|

||||

|

||||

test-smolvla-ete-train:

|

||||

lerobot-train \

|

||||

python -m lerobot.scripts.train \

|

||||

--policy.type=smolvla \

|

||||

--policy.n_action_steps=20 \

|

||||

--policy.chunk_size=20 \

|

||||

@@ -171,7 +171,7 @@ test-smolvla-ete-train:

|

||||

--output_dir=tests/outputs/smolvla/

|

||||

|

||||

test-smolvla-ete-eval:

|

||||

lerobot-eval \

|

||||

python -m lerobot.scripts.eval \

|

||||

--policy.path=tests/outputs/smolvla/checkpoints/000004/pretrained_model \

|

||||

--policy.device=$(DEVICE) \

|

||||

--env.type=aloha \

|

||||

|

||||

@@ -6,7 +6,7 @@

|

||||

|

||||

<div align="center">

|

||||

|

||||

[](https://github.com/huggingface/lerobot/actions/workflows/nightly.yml?query=branch%3Amain)

|

||||

[](https://github.com/huggingface/lerobot/actions/workflows/nighty.yml?query=branch%3Amain)

|

||||

[](https://www.python.org/downloads/)

|

||||

[](https://github.com/huggingface/lerobot/blob/main/LICENSE)

|

||||

[](https://pypi.org/project/lerobot/)

|

||||

@@ -202,7 +202,7 @@ Check out [example 1](https://github.com/huggingface/lerobot/blob/main/examples/

|

||||

You can also locally visualize episodes from a dataset on the hub by executing our script from the command line:

|

||||

|

||||

```bash

|

||||

lerobot-dataset-viz \

|

||||

python -m lerobot.scripts.visualize_dataset \

|

||||

--repo-id lerobot/pusht \

|

||||

--episode-index 0

|

||||

```

|

||||

@@ -210,7 +210,7 @@ lerobot-dataset-viz \

|

||||

or from a dataset in a local folder with the `--root` option and the `--local-files-only` flag (in the following case the dataset will be searched for in `./my_local_data_dir/lerobot/pusht`)

|

||||

|

||||

```bash

|

||||

lerobot-dataset-viz \

|

||||

python -m lerobot.scripts.visualize_dataset \

|

||||

--repo-id lerobot/pusht \

|

||||

--root ./my_local_data_dir \

|

||||

--local-files-only 1 \

|

||||

@@ -221,13 +221,13 @@ It will open `rerun.io` and display the camera streams, robot states and actions

|

||||

|

||||

https://github-production-user-asset-6210df.s3.amazonaws.com/4681518/328035972-fd46b787-b532-47e2-bb6f-fd536a55a7ed.mov?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Credential=AKIAVCODYLSA53PQK4ZA%2F20240505%2Fus-east-1%2Fs3%2Faws4_request&X-Amz-Date=20240505T172924Z&X-Amz-Expires=300&X-Amz-Signature=d680b26c532eeaf80740f08af3320d22ad0b8a4e4da1bcc4f33142c15b509eda&X-Amz-SignedHeaders=host&actor_id=24889239&key_id=0&repo_id=748713144

|

||||

|

||||

Our script can also visualize datasets stored on a distant server. See `lerobot-dataset-viz --help` for more instructions.

|

||||

Our script can also visualize datasets stored on a distant server. See `python -m lerobot.scripts.visualize_dataset --help` for more instructions.

|

||||

|

||||

### The `LeRobotDataset` format

|

||||

|

||||

A dataset in `LeRobotDataset` format is very simple to use. It can be loaded from a repository on the Hugging Face hub or a local folder simply with e.g. `dataset = LeRobotDataset("lerobot/aloha_static_coffee")` and can be indexed into like any Hugging Face and PyTorch dataset. For instance `dataset[0]` will retrieve a single temporal frame from the dataset containing observation(s) and an action as PyTorch tensors ready to be fed to a model.

|

||||

|

||||

A specificity of `LeRobotDataset` is that, rather than retrieving a single frame by its index, we can retrieve several frames based on their temporal relationship with the indexed frame, by setting `delta_timestamps` to a list of relative times with respect to the indexed frame. For example, with `delta_timestamps = {"observation.image": [-1, -0.5, -0.2, 0]}` one can retrieve, for a given index, 4 frames: 3 "previous" frames 1 second, 0.5 seconds, and 0.2 seconds before the indexed frame, and the indexed frame itself (corresponding to the 0 entry). See example [1_load_lerobot_dataset.py](https://github.com/huggingface/lerobot/blob/main/examples/dataset/load_lerobot_dataset.py) for more details on `delta_timestamps`.

|

||||

A specificity of `LeRobotDataset` is that, rather than retrieving a single frame by its index, we can retrieve several frames based on their temporal relationship with the indexed frame, by setting `delta_timestamps` to a list of relative times with respect to the indexed frame. For example, with `delta_timestamps = {"observation.image": [-1, -0.5, -0.2, 0]}` one can retrieve, for a given index, 4 frames: 3 "previous" frames 1 second, 0.5 seconds, and 0.2 seconds before the indexed frame, and the indexed frame itself (corresponding to the 0 entry). See example [1_load_lerobot_dataset.py](https://github.com/huggingface/lerobot/blob/main/examples/1_load_lerobot_dataset.py) for more details on `delta_timestamps`.
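The mapping from relative times to frames described above can be sketched in plain Python. This is an illustrative sketch only, not the library's implementation: the helper name `resolve_delta_indices`, its parameters, and the choice to clamp out-of-range queries to the episode bounds are all assumptions made for the example (the library may pad or flag such frames instead).

```python
def resolve_delta_indices(index, fps, deltas, ep_start, ep_end):
    """Map relative times in seconds to absolute frame indices.

    Hypothetical helper: ep_start is the first frame of the episode that
    contains `index`, and ep_end is one past its last frame.
    """
    indices = []
    for delta in deltas:
        offset = round(delta * fps)  # seconds -> whole number of frames
        target = index + offset
        # Assumption: clamp so a query never leaves the current episode.
        target = max(ep_start, min(target, ep_end - 1))
        indices.append(target)
    return indices


# The README's example deltas, for a dataset assumed to be recorded at 10 fps.
frames = resolve_delta_indices(
    index=25, fps=10, deltas=[-1, -0.5, -0.2, 0], ep_start=0, ep_end=100
)
print(frames)
```

At 10 fps, the deltas `[-1, -0.5, -0.2, 0]` correspond to offsets of 10, 5, 2 and 0 frames before the indexed frame.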

|

||||

|

||||

Under the hood, the `LeRobotDataset` format makes use of several ways to serialize data which can be useful to understand if you plan to work more closely with this format. We tried to make a flexible yet simple dataset format that would cover most types of features and specificities present in reinforcement learning and robotics, in simulation and in the real world, with a focus on cameras and robot states but easily extended to other types of sensory inputs as long as they can be represented by a tensor.

|

||||

|

||||

@@ -246,29 +246,19 @@ dataset attributes:

|

||||

│ ├ timestamp (float32): timestamp in the episode

|

||||

│ ├ next.done (bool): indicates the end of an episode ; True for the last frame in each episode

|

||||

│ └ index (int64): general index in the whole dataset

|

||||

├ meta: a LeRobotDatasetMetadata object containing:

|

||||

│ ├ info: a dictionary of metadata on the dataset

|

||||

│ │ ├ codebase_version (str): this is to keep track of the codebase version the dataset was created with

|

||||

│ │ ├ fps (int): frame per second the dataset is recorded/synchronized to

|

||||

│ │ ├ features (dict): all features contained in the dataset with their shapes and types

|

||||

│ │ ├ total_episodes (int): total number of episodes in the dataset

|

||||

│ │ ├ total_frames (int): total number of frames in the dataset

|

||||

│ │ ├ robot_type (str): robot type used for recording

|

||||

│ │ ├ data_path (str): formattable string for the parquet files

|

||||

│ │ └ video_path (str): formattable string for the video files (if using videos)

|

||||

│ ├ episodes: a DataFrame containing episode metadata with columns:

|

||||

│ │ ├ episode_index (int): index of the episode

|

||||

│ │ ├ tasks (list): list of tasks for this episode

|

||||

│ │ ├ length (int): number of frames in this episode

|

||||

│ │ ├ dataset_from_index (int): start index of this episode in the dataset

|

||||

│ │ └ dataset_to_index (int): end index of this episode in the dataset

|

||||

│ ├ stats: a dictionary of statistics (max, mean, min, std) for each feature in the dataset, for instance

|

||||

│ │ ├ observation.images.front_cam: {'max': tensor with same number of dimensions (e.g. `(c, 1, 1)` for images, `(c,)` for states), etc.}

|

||||

│ │ └ ...

|

||||

│ └ tasks: a DataFrame containing task information with task names as index and task_index as values

|

||||

├ root (Path): local directory where the dataset is stored

|

||||

├ image_transforms (Callable): optional image transformations to apply to visual modalities

|

||||

└ delta_timestamps (dict): optional delta timestamps for temporal queries

|

||||

├ episode_data_index: contains 2 tensors with the start and end indices of each episode

|

||||

│ ├ from (1D int64 tensor): first frame index for each episode — shape (num episodes,) starts with 0

|

||||

│ └ to: (1D int64 tensor): last frame index for each episode — shape (num episodes,)

|

||||

├ stats: a dictionary of statistics (max, mean, min, std) for each feature in the dataset, for instance

|

||||

│ ├ observation.images.cam_high: {'max': tensor with same number of dimensions (e.g. `(c, 1, 1)` for images, `(c,)` for states), etc.}

|

||||

│ ...

|

||||

├ info: a dictionary of metadata on the dataset

|

||||

│ ├ codebase_version (str): this is to keep track of the codebase version the dataset was created with

|

||||

│ ├ fps (float): frame per second the dataset is recorded/synchronized to

|

||||

│ ├ video (bool): indicates if frames are encoded in mp4 video files to save space or stored as png files

|

||||

│ └ encoding (dict): if video, this documents the main options that were used with ffmpeg to encode the videos

|

||||

├ videos_dir (Path): where the mp4 videos or png images are stored/accessed

|

||||

└ camera_keys (list of string): the keys to access camera features in the item returned by the dataset (e.g. `["observation.images.cam_high", ...]`)

|

||||

```
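The per-episode start and end indices listed in the tree above can be derived from episode lengths alone. The sketch below is a minimal illustration under stated assumptions: `episode_bounds` is a hypothetical helper, and the end index is treated as exclusive (one past the last frame), which the tree itself does not specify.

```python
from itertools import accumulate


def episode_bounds(lengths):
    """Return (starts, ends) frame indices for consecutive episodes.

    Hypothetical helper: `ends` are exclusive bounds, so episode i covers
    frames starts[i] .. ends[i] - 1 of the flat dataset index.
    """
    ends = list(accumulate(lengths))  # running total of frames
    starts = [end - n for end, n in zip(ends, lengths)]
    return starts, ends


# Three episodes with 3, 5 and 2 frames respectively.
starts, ends = episode_bounds([3, 5, 2])
print(starts, ends)
```

The first start is always 0, matching the "starts with 0" note in the tree, and the final end equals the total number of frames.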

|

||||

|

||||

A `LeRobotDataset` is serialised using several widespread file formats for each of its parts, namely:

|

||||

@@ -279,13 +269,49 @@ A `LeRobotDataset` is serialised using several widespread file formats for each

|

||||

|

||||

Datasets can be uploaded to and downloaded from the Hugging Face hub seamlessly. To work on a local dataset, you can specify its location with the `root` argument if it's not in the default `~/.cache/huggingface/lerobot` location.
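As a rough sketch of the lookup rule described above, the snippet below resolves where a dataset would be searched for on disk. It is not the library's code: `resolve_dataset_dir` and `DEFAULT_ROOT` are hypothetical names, and appending the repository id to the root is an assumption taken from the README's `./my_local_data_dir/lerobot/pusht` example.

```python
from pathlib import Path

# Default cache location named in the README.
DEFAULT_ROOT = Path.home() / ".cache" / "huggingface" / "lerobot"


def resolve_dataset_dir(repo_id, root=None):
    """Return the local directory for `repo_id` (hypothetical helper).

    Assumption: the repo id is appended to the explicit root when one is
    given, and to the default cache location otherwise.
    """
    base = Path(root) if root is not None else DEFAULT_ROOT
    return base / repo_id


print(resolve_dataset_dir("lerobot/pusht", root="./my_local_data_dir"))
print(resolve_dataset_dir("lerobot/pusht"))
```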

|

||||

|

||||

### Evaluate a pretrained policy

|

||||

|

||||

Check out [example 2](https://github.com/huggingface/lerobot/blob/main/examples/2_evaluate_pretrained_policy.py) that illustrates how to download a pretrained policy from Hugging Face hub, and run an evaluation on its corresponding environment.

|

||||

|

||||

We also provide a more capable script to parallelize the evaluation over multiple environments during the same rollout. Here is an example with a pretrained model hosted on [lerobot/diffusion_pusht](https://huggingface.co/lerobot/diffusion_pusht):

|

||||

|

||||

```bash

|

||||

python -m lerobot.scripts.eval \

|

||||

--policy.path=lerobot/diffusion_pusht \

|

||||

--env.type=pusht \

|

||||

--eval.batch_size=10 \

|

||||

--eval.n_episodes=10 \

|

||||

--policy.use_amp=false \

|

||||

--policy.device=cuda

|

||||

```

|

||||

|

||||

Note: After training your own policy, you can re-evaluate the checkpoints with:

|

||||

|

||||

```bash

|

||||

python -m lerobot.scripts.eval --policy.path={OUTPUT_DIR}/checkpoints/last/pretrained_model

|

||||

```

|

||||

|

||||

See `python -m lerobot.scripts.eval --help` for more instructions.

|

||||

|

||||

### Train your own policy

|

||||

|

||||

Check out [example 3](https://github.com/huggingface/lerobot/blob/main/examples/3_train_policy.py) that illustrates how to train a model using our core library in python, and [example 4](https://github.com/huggingface/lerobot/blob/main/examples/4_train_policy_with_script.md) that shows how to use our training script from command line.

|

||||

|

||||

To use wandb for logging training and evaluation curves, make sure you've run `wandb login` as a one-time setup step. Then, when running the training command above, enable WandB in the configuration by adding `--wandb.enable=true`.

|

||||

|

||||

A link to the wandb logs for the run will also show up in yellow in your terminal. Here is an example of what they look like in your browser. Please also check [here](https://github.com/huggingface/lerobot/blob/main/examples/4_train_policy_with_script.md#typical-logs-and-metrics) for the explanation of some commonly used metrics in logs.

|

||||

|

||||

<img src="https://raw.githubusercontent.com/huggingface/lerobot/main/media/wandb.png" alt="WandB logs example">

|

||||

|

||||

Note: For efficiency, during training every checkpoint is evaluated on a low number of episodes. You may use `--eval.n_episodes=500` to evaluate on more episodes than the default. Or, after training, you may want to re-evaluate your best checkpoints on more episodes or change the evaluation settings. See `python -m lerobot.scripts.eval --help` for more instructions.

|

||||

|

||||

#### Reproduce state-of-the-art (SOTA)

|

||||

|

||||

We provide some pretrained policies on our [hub page](https://huggingface.co/lerobot) that can achieve state-of-the-art performances.

|

||||

You can reproduce their training by loading the config from their run. Simply running:

|

||||

|

||||

```bash

|

||||

lerobot-train --config_path=lerobot/diffusion_pusht

|

||||

python -m lerobot.scripts.train --config_path=lerobot/diffusion_pusht

|

||||

```

|

||||

|

||||

reproduces SOTA results for Diffusion Policy on the PushT task.

|

||||

@@ -337,7 +363,3 @@ If you want, you can cite this work with:

|

||||

## Star History

|

||||

|

||||

[](https://star-history.com/#huggingface/lerobot&Timeline)

|

||||

|

||||

```

|

||||

|

||||

```

|

||||

|

||||

@@ -1,378 +0,0 @@

|

||||

"""

|

||||

Benchmark memory footprint and inference latency of a policy on arbitrary devices.

|

||||

|

||||

This script loads a pretrained policy directly (similar to the async inference server)

|

||||

and generates dummy input data based on the policy's input_features to perform

|

||||

accurate benchmarking without requiring datasets.

|

||||

"""

|

||||

|

||||

import argparse

|

||||

import os

|

||||

import signal

|

||||

import statistics

|

||||

from contextlib import contextmanager

|

||||

from datetime import datetime

|

||||

from pathlib import Path

|

||||

|

||||

import psutil

|

||||

import torch

|

||||

from tqdm import tqdm

|

||||

|

||||

from lerobot.configs.types import FeatureType

|

||||

from lerobot.policies.factory import get_policy_class

|

||||

from lerobot.policies.pretrained import PreTrainedPolicy

|

||||

|

||||

|

||||

class TimeoutException(Exception):

|

||||

pass

|

||||

|

||||

|

||||

@contextmanager

|

||||

def timeout(seconds):

|

||||

def signal_handler(signum, frame):

|

||||

raise TimeoutException(f"Timed out after {seconds} seconds")

|

||||

|

||||

# On Windows, signal is not available, so we can't use this timeout mechanism

|

||||

if not hasattr(signal, "SIGALRM"):

|

||||

yield

|

||||

return

|

||||

|

||||

old_handler = signal.signal(signal.SIGALRM, signal_handler)

|

||||

try:

|

||||

# signal.alarm expects integer seconds

|

||||

# for float seconds, we can use setitimer

|

||||

signal.setitimer(signal.ITIMER_REAL, seconds)

|

||||

yield

|

||||

finally:

|

||||

signal.setitimer(signal.ITIMER_REAL, 0)

|

||||

signal.signal(signal.SIGALRM, old_handler)

|

||||

|

||||

|

||||

def bytes_to_human(n: int) -> str:

|

||||

for unit in ["B", "KB", "MB", "GB", "TB"]:

|

||||

if n < 1024:

|

||||

return f"{n:.2f} {unit}"

|

||||

n /= 1024

|

||||

return f"{n:.2f} PB"

|

||||

|

||||

|

||||

def percentile(values: list[float], p: float) -> float:

|

||||

if not values:

|

||||

return float("nan")

|

||||

k = (len(values) - 1) * (p / 100.0)

|

||||

f = int(k)

|

||||

c = min(f + 1, len(values) - 1)

|

||||

if f == c:

|

||||

return values[f]

|

||||

return values[f] + (values[c] - values[f]) * (k - f)

|

||||

|

||||

|

||||

def generate_dummy_observation(input_features: dict, device: str = "cpu") -> dict:

|

||||

"""Generate dummy observation data based on policy input features."""

|

||||

dummy_obs = {}

|

||||

|

||||

for key, feature in input_features.items():

|

||||

shape = feature.shape

|

||||

|

||||

if feature.type == FeatureType.VISUAL:

|

||||

# Images: random values in [0, 1] range (already normalized)

|

||||

dummy_obs[key] = torch.rand(shape, dtype=torch.float32, device=device)

|

||||

elif feature.type in [FeatureType.STATE, FeatureType.ACTION, FeatureType.ENV]:

|

||||

# State/action/env: random normal distribution

|

||||

dummy_obs[key] = torch.randn(shape, dtype=torch.float32, device=device)

|

||||

else:

|

||||

# Default: random normal for unknown types

|

||||

dummy_obs[key] = torch.randn(shape, dtype=torch.float32, device=device)

|

||||

|

||||

# Add batch dimension

|

||||

for key in dummy_obs:

|

||||

dummy_obs[key] = dummy_obs[key].unsqueeze(0)

|

||||

|

||||

# Add task string for language-conditioned policies

|

||||

dummy_obs["task"] = ""

|

||||

|

||||

return dummy_obs

|

||||

|

||||

|

||||

def main():

|

||||

parser = argparse.ArgumentParser(description="Policy inference benchmark")

|

||||

parser.add_argument(

|

||||

"--policy-id", type=str, required=True, help="Model ID or local path to pretrained policy"

|

||||

)

|

||||

parser.add_argument(

|

||||

"--policy-type", type=str, required=True, help="Type of policy (smolvla, act, diffusion, etc.)"

|

||||

)

|

||||

parser.add_argument(

|

||||

"--device", type=str, default="mps", choices=["cuda", "cpu", "mps"], help="Device to run on"

|

||||

)

|

||||

parser.add_argument("--seed", type=int, default=42, help="Random seed")

|

||||

parser.add_argument(

|

||||

"--num-samples", type=int, default=100, help="Number of inference samples to benchmark"

|

||||

)

|

||||

parser.add_argument("--warmup", type=int, default=10, help="Number of warmup samples (not timed)")

|

||||

parser.add_argument(

|

||||

"--output-dir", type=str, default="outputs/benchmarks", help="Directory to save benchmark results"

|

||||

)

|

||||

parser.add_argument(

|

||||

"--timeout",

|

||||

type=float,

|

||||

default=0.3,

|

||||

help="Timeout for each inference pass in seconds (default: 0.3s = 300ms)",

|

||||

)

|

||||

args = parser.parse_args()

|

||||

|

||||

# Seed & deterministic-ish setup

|

||||

torch.manual_seed(args.seed)

|

||||

if args.device == "cuda":

|

||||

torch.cuda.manual_seed_all(args.seed)

|

||||

torch.backends.cudnn.benchmark = False

|

||||

torch.backends.cudnn.deterministic = False # leave False to avoid perf cliffs

|

||||

|

||||

# Resolve device availability

|

||||

device = args.device.lower()

|

||||

if device == "cuda" and not torch.cuda.is_available():

|

||||

print("[!] CUDA requested but unavailable. Falling back to CPU.")

|

||||

device = "cpu"

|

||||

elif device == "mps" and not (hasattr(torch.backends, "mps") and torch.backends.mps.is_available()):

|

||||

print("[!] MPS requested but unavailable. Falling back to CPU.")

|

||||

device = "cpu"

|

||||

|

||||

use_cuda = device == "cuda"

|

||||

|

||||

# Create output directory and log file

|

||||

output_dir = Path(args.output_dir)

|

||||

output_dir.mkdir(parents=True, exist_ok=True)

|

||||

timestamp = datetime.now().strftime("%Y%m%d_%H%M%S")

|

||||

policy_name = args.policy_id.replace("/", "_").replace("\\", "_")

|

||||

log_file = output_dir / f"benchmark_{args.policy_type}_{policy_name}_{device}_{timestamp}.txt"

|

||||

|

||||

# Load policy directly from pretrained (similar to async inference server)

|

||||

print(f"Loading policy {args.policy_type} from {args.policy_id}...")

|

||||

policy_class = get_policy_class(args.policy_type)

|

||||

policy: PreTrainedPolicy = policy_class.from_pretrained(args.policy_id)

|

||||

policy.eval()

|

||||

policy.to(device)

|

||||

|

||||

print(f"Policy loaded on {device}")

|

||||

print(f"Input features: {list(policy.config.input_features.keys())}")

|

||||

print(f"Output features: {list(policy.config.output_features.keys())}")

|

||||

|

||||

# Generate dummy observation based on policy input features

|

||||

dummy_observation = generate_dummy_observation(policy.config.input_features, device)

|

||||

dummy_observation["task"] = ""

|

||||

|

||||

# Helper to sync for fair timings

|

||||

def _sync(dev_=device):

|

||||

if dev_ == "cuda" and torch.cuda.is_available():

|

||||

torch.cuda.synchronize()

|

||||

elif dev_ == "mps" and hasattr(torch, "mps"):

|

||||

try:

|

||||

torch.mps.synchronize()

|

||||

except AttributeError:

|

||||

pass # MPS sync not available in this PyTorch version

|

||||

|

||||

# Warmup (to stabilize kernels/caches)

|

||||

print("Warming up...")

|

||||

with torch.no_grad():

|

||||

policy.reset()

|

||||

for _ in range(args.warmup):

|

||||

_ = policy.select_action(dummy_observation)

|

||||

_sync()

|

||||

|

||||

# Memory footprint before timing

|

||||

process = psutil.Process(os.getpid())

|

||||

rss_before = process.memory_info().rss

|

||||

if use_cuda:

|

||||

torch.cuda.reset_peak_memory_stats()

|

||||

|

||||

# PyTorch timing with Event objects for more accurate GPU timing

|

||||

print(f"Running benchmark: {args.num_samples} samples...")

|

||||

|

||||

if use_cuda:

|

||||

# Use CUDA Events for precise GPU timing

|

||||

start_events = []

|

||||

end_events = []

|

||||

timeout_count = 0

|

||||

|

||||

with torch.no_grad():

|

||||

for forward in tqdm(range(args.num_samples), desc="Trials"):

|

||||

start_event = torch.cuda.Event(enable_timing=True)

|

||||

end_event = torch.cuda.Event(enable_timing=True)

|

||||

try:

|

||||

with timeout(args.timeout):

|

||||

start_event.record()

|

||||

_ = policy.select_action(dummy_observation)

|

||||

end_event.record()

|

||||

|

||||

start_events.append(start_event)

|

||||

end_events.append(end_event)

|

||||

except TimeoutException:

|

||||

timeout_count += 1

|

||||

# Add placeholder for timeout

|

||||

start_events.append(None)

|

||||

end_events.append(None)

|

||||

print(f"\n[!] Timeout on forward {forward + 1}")

|

||||

continue

|

||||

|

||||

# Synchronize and collect timing results

|

||||

torch.cuda.synchronize()

|

||||

per_forward_ms = []

|

||||

for start_event, end_event in zip(start_events, end_events, strict=True):

|

||||

if start_event is None:

|

||||

per_forward_ms.append(args.timeout * 1000)

|

||||

else:

|

||||

per_forward_ms.append(start_event.elapsed_time(end_event))

|

||||

|

||||

if timeout_count > 0:

|

||||

print(f"[!] {timeout_count} inference passes timed out (>{args.timeout * 1000:.1f}ms)")

|

||||

|

||||

else:

|

||||

# Use simple time.perf_counter for CPU/MPS timing with timeout

|

||||

import time

|

||||

|

||||

per_forward_ms = []

|

||||

timeout_count = 0

|

||||

|

||||

with torch.no_grad():

|

||||

for sample in tqdm(range(args.num_samples), desc="Samples"):

|

||||

try:

|

||||

with timeout(args.timeout):

|

||||

start_time = time.perf_counter()

|

||||

_ = policy.select_action(dummy_observation)

|

||||

end_time = time.perf_counter()

|

||||

|

||||

per_forward_ms.append((end_time - start_time) * 1000) # Convert to ms

|

||||

except TimeoutException:

|

||||

timeout_count += 1

|

||||

per_forward_ms.append(args.timeout * 1000)

|

||||

print(f"\n[!] Timeout on sample {sample + 1}")

|

||||

continue

|

||||

|

||||

if timeout_count > 0:

|

||||

print(f"[!] {timeout_count} inference passes timed out (>{args.timeout * 1000:.1f}ms)")

|

||||

|

||||

# Memory footprint after timing

|

||||

rss_after = process.memory_info().rss

|

||||

rss_delta = rss_after - rss_before

|

||||

cuda_peak = torch.cuda.max_memory_allocated() if use_cuda else 0

|

||||

|

||||

# Sort timing results for percentile calculations

|

||||

per_forward_ms_sorted = sorted(per_forward_ms)

|

||||

|

||||

mean_ms = statistics.fmean(per_forward_ms) if per_forward_ms else float("nan")

|

||||

std_ms = statistics.pstdev(per_forward_ms) if len(per_forward_ms) > 1 else 0.0

|

||||

min_ms = per_forward_ms_sorted[0] if per_forward_ms_sorted else float("nan")

|

||||

max_ms = per_forward_ms_sorted[-1] if per_forward_ms_sorted else float("nan")

|

||||

p50_ms = percentile(per_forward_ms_sorted, 50)

|

||||

p95_ms = percentile(per_forward_ms_sorted, 95)

|

||||

|

||||

# Model size

|

||||

num_params = sum(p.numel() for p in policy.parameters())

|

||||

|

||||

# Prepare results for logging

|

||||

results = {

|

||||

"timestamp": datetime.now().isoformat(),

|

||||

"policy_type": args.policy_type,

|

||||

"policy_id": args.policy_id,

|

||||

"device": device,

|

||||

"num_trials": args.num_samples,

|

||||

"forwards_per_trial": 1,

|

||||

"warmup": args.warmup,

|

||||

"timeout_ms": args.timeout * 1000,

|

||||

"seed": args.seed,

|

||||

"num_params": num_params,

|

||||

"timeout_count": timeout_count,

|

||||

"latency_mean_ms": mean_ms,

|

||||

"latency_std_ms": std_ms,

|

||||

"latency_min_ms": min_ms,

|

||||

"latency_max_ms": max_ms,

|

||||

"latency_p50_ms": p50_ms,

|

||||

"latency_p95_ms": p95_ms,

|

||||

"cpu_rss_before": rss_before,

|

||||

"cpu_rss_after": rss_after,

|

||||

"cpu_rss_delta": rss_delta,

|

||||

"cuda_peak_alloc": cuda_peak,

|

||||

"input_features": list(policy.config.input_features.keys()),

|

||||

"output_features": list(policy.config.output_features.keys()),

|

||||

}

|

||||

|

||||

# Format and write results to log file

|

||||

log_content = f"""

|

||||

=== LeRobot Policy Inference Benchmark ===

|

||||

Timestamp: {results["timestamp"]}

|

||||

Policy: {results["policy_type"]} ({results["policy_id"]})

|

||||

Device: {results["device"]}

|

||||

Seed: {results["seed"]}

|

||||

|

||||

=== Model Information ===

|

||||

Parameters: {results["num_params"]:,}

|

||||

Input Features: {", ".join(results["input_features"])}

|

||||

Output Features: {", ".join(results["output_features"])}

|

||||

|

||||

=== Benchmark Configuration ===

|

||||

Samples: {results["num_trials"]}

|

||||

Warmup: {results["warmup"]}

|

||||

Total Measurements: {len(per_forward_ms)}

|

||||

Timeout: {results["timeout_ms"]:.1f}ms

|

||||

Timeouts: {results["timeout_count"]} / {results["num_trials"]}

|

||||

|

||||

=== Latency Results (ms) ===

|

||||

Mean: {results["latency_mean_ms"]:.3f}

|

||||

Std Dev: {results["latency_std_ms"]:.3f}

|

||||

Min: {results["latency_min_ms"]:.3f}

|

||||

Max: {results["latency_max_ms"]:.3f}

|

||||

P50: {results["latency_p50_ms"]:.3f}

|

||||

P95: {results["latency_p95_ms"]:.3f}

|

||||

|

||||

=== Memory Footprint ===

|

||||

CPU RSS Before: {bytes_to_human(results["cpu_rss_before"])}

|

||||

CPU RSS After: {bytes_to_human(results["cpu_rss_after"])} (Δ {bytes_to_human(results["cpu_rss_delta"])})

|

||||

"""

|

||||

|

||||

if use_cuda:

|

||||

log_content += f"CUDA Peak: {bytes_to_human(results['cuda_peak_alloc'])} (reset before timing)\n"

|

||||

|

||||

log_content += f"""

|

||||

=== Raw Timing Data (first 20 measurements, ms) ===

|

||||

{", ".join(f"{t:.3f}" for t in per_forward_ms[:20])}

|

||||

{"..." if len(per_forward_ms) > 20 else ""}

|

||||

|

||||

=== Summary Statistics ===

|

||||

Timing Method: {"CUDA Events" if use_cuda else "time.perf_counter"}

|

||||

Device Available: {torch.cuda.is_available() if device == "cuda" else torch.backends.mps.is_available() if device == "mps" else True}

|

||||

PyTorch Version: {torch.__version__}

|

||||

|

||||

Benchmark completed successfully at {datetime.now().strftime("%Y-%m-%d %H:%M:%S")}

|

||||

"""

|

||||

|

||||

# Write to log file

|

||||

with open(log_file, "w") as f:

|

||||

f.write(log_content)

|

||||

|

||||

# Print to console (shorter version)

|

||||

print("\n=== Inference Benchmark Results ===")

|

||||

print(f"Policy: {args.policy_type} ({args.policy_id})")

|

||||

print(f"Device: {device}")

|

||||

print(f"Samples: {args.num_samples} | Warmup: {args.warmup}")

|

||||

print(f"Model params: {num_params:,}")

|

||||

|

||||

print("\nLatency per forward (ms):")

|

||||

print(f" mean: {mean_ms:.3f} std: {std_ms:.3f}")

|

||||

print(f" min: {min_ms:.3f} max: {max_ms:.3f}")

|

||||

print(f" p50: {p50_ms:.3f} p95: {p95_ms:.3f}")

|

||||

|

||||

print("\nMemory footprint:")

|

||||

print(f" CPU RSS before: {bytes_to_human(rss_before)}")

|

||||

print(f" CPU RSS after : {bytes_to_human(rss_after)} (Δ {bytes_to_human(rss_delta)})")

|

||||

if use_cuda:

|

||||

print(

|

||||

f" CUDA peak allocated: {bytes_to_human(cuda_peak)} "

|

||||

f"(reset by reset_peak_memory_stats before timing)"

|

||||

)

|

||||

|

||||

print(f"\nResults saved to: {log_file}")

|

||||

print("Benchmark completed successfully!")

|

||||

|

||||

|

||||

if __name__ == "__main__":

|

||||

main()

|

||||

@@ -108,8 +108,7 @@ def save_decoded_frames(

|

||||

|

||||

|

||||

def save_first_episode(imgs_dir: Path, dataset: LeRobotDataset) -> None:

|

||||

episode_index = 0

|

||||

ep_num_images = dataset.meta.episodes["length"][episode_index]

|

||||

ep_num_images = dataset.episode_data_index["to"][0].item()

|

||||

if imgs_dir.exists() and len(list(imgs_dir.glob("frame_*.png"))) == ep_num_images:

|

||||

return

|

||||

|

||||

@@ -266,8 +265,7 @@ def benchmark_encoding_decoding(

|

||||

overwrite=True,

|

||||

)

|

||||

|

||||

episode_index = 0

|

||||

ep_num_images = dataset.meta.episodes["length"][episode_index]

|

||||

ep_num_images = dataset.episode_data_index["to"][0].item()

|

||||

width, height = tuple(dataset[0][dataset.meta.camera_keys[0]].shape[-2:])

|

||||

num_pixels = width * height

|

||||

video_size_bytes = video_path.stat().st_size

|

||||

|

||||

@@ -39,7 +39,6 @@ RUN apt-get update && apt-get install -y --no-install-recommends \

|

||||

software-properties-common build-essential git curl \

|

||||

libglib2.0-0 libgl1-mesa-glx libegl1-mesa ffmpeg \

|

||||

libusb-1.0-0-dev speech-dispatcher libgeos-dev portaudio19-dev \

|

||||

cmake pkg-config ninja-build \

|

||||

&& add-apt-repository -y ppa:deadsnakes/ppa \

|

||||

&& apt-get update \

|

||||

&& apt-get install -y --no-install-recommends \

|

||||

|

||||

@@ -29,9 +29,8 @@ ENV DEBIAN_FRONTEND=noninteractive \

|

||||

|

||||

# Install system dependencies and uv (as root)

|

||||

RUN apt-get update && apt-get install -y --no-install-recommends \

|

||||

build-essential git curl libglib2.0-0 libegl1-mesa-dev ffmpeg \

|

||||

build-essential git curl libglib2.0-0 libegl1-mesa ffmpeg \

|

||||

libusb-1.0-0-dev speech-dispatcher libgeos-dev portaudio19-dev \

|

||||

cmake pkg-config ninja-build \

|

||||

&& curl -LsSf https://astral.sh/uv/install.sh | sh \

|

||||

&& mv /root/.local/bin/uv /usr/local/bin/uv \

|

||||

&& useradd --create-home --shell /bin/bash user_lerobot \

|

||||

|

||||

@@ -20,24 +20,14 @@

|

||||

- local: async

|

||||

title: Use Async Inference

|

||||

title: "Tutorials"

|

||||

- sections:

|

||||

- local: lerobot-dataset-v3

|

||||

title: Using LeRobotDataset

|

||||

- local: porting_datasets_v3

|

||||

title: Porting Large Datasets

|

||||

title: "Datasets"

|

||||

- sections:

|

||||

- local: smolvla

|

||||

title: Finetune SmolVLA

|

||||

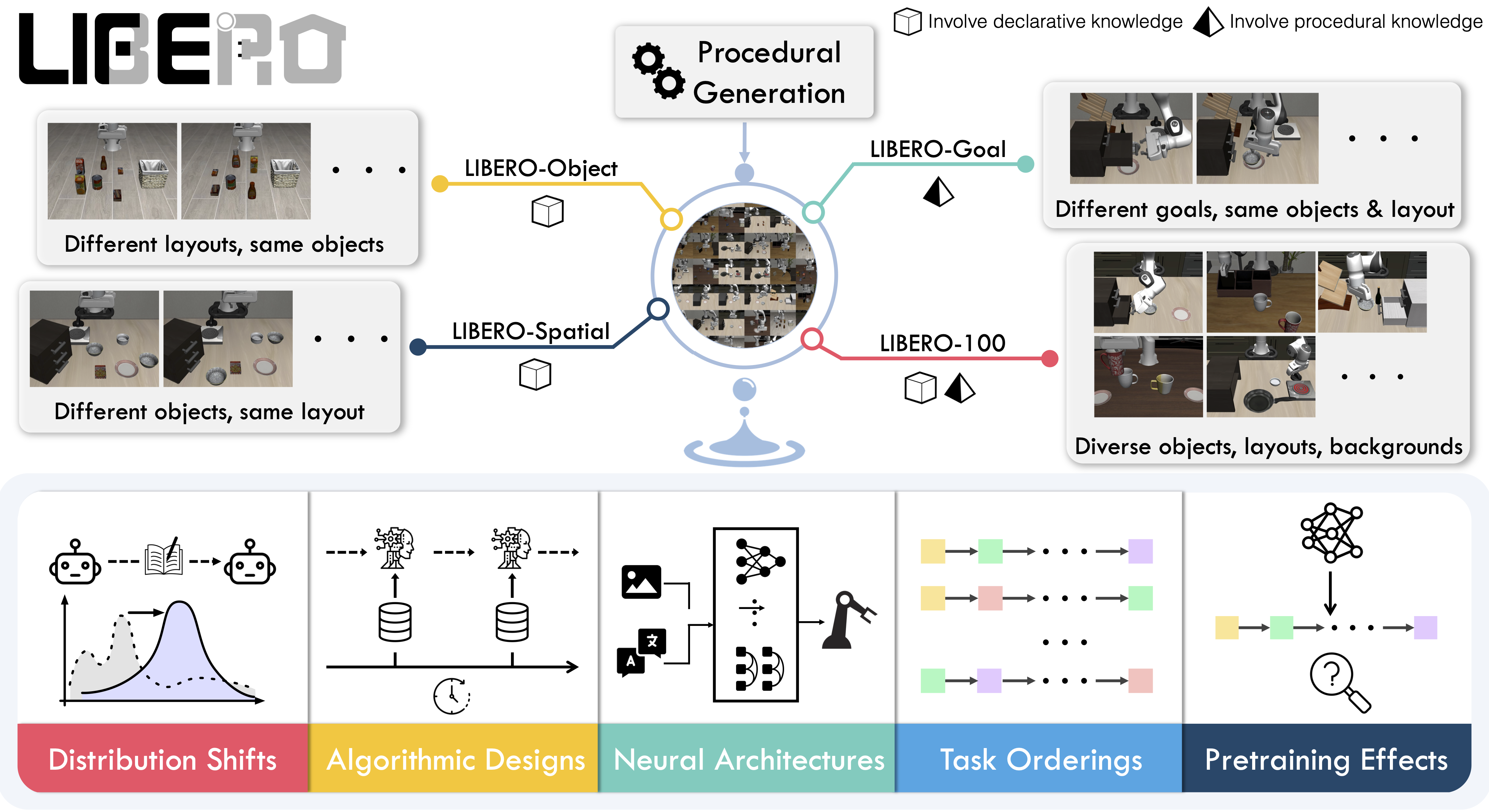

- local: libero

|

||||

title: Using Libero

|

||||

title: "Policies"

|

||||

|

||||

- sections:

|

||||

- local: introduction_processors

|

||||

title: Introduction to Robot Processors

|

||||

- local: debug_processor_pipeline

|

||||

title: Debug your processor pipeline

|

||||

- local: implement_your_own_processor

|

||||

title: Implement your own processor

|

||||

- local: processors_robots_teleop

|

||||

@@ -54,8 +44,6 @@

|

||||

title: LeKiwi

|

||||

- local: hope_jr

|

||||

title: Hope Jr

|

||||

- local: reachy2

|

||||

title: Reachy 2

|

||||

title: "Robots"

|

||||

- sections:

|

||||

- local: phone_teleop

|

||||

@@ -64,8 +52,6 @@

|

||||

- sections:

|

||||

- local: notebooks

|

||||

title: Notebooks

|

||||

- local: feetech

|

||||

title: Updating Feetech Firmware

|

||||

title: "Resources"

|

||||

- sections:

|

||||

- local: contributing

|

||||

|

||||

@@ -1,61 +1,5 @@

|

||||

# Backward compatibility

|

||||

|

||||

## Policy Normalization Migration (PR #1452)

|

||||

|

||||

**Breaking Change**: LeRobot policies no longer have built-in normalization layers embedded in their weights. Normalization is now handled by external `PolicyProcessorPipeline` components.

|

||||

|

||||

### What changed?

|

||||

|

||||

| | Before PR #1452 | After PR #1452 |

|

||||

| -------------------------- | ------------------------------------------------ | ------------------------------------------------------------ |

|

||||

| **Normalization Location** | Embedded in model weights (`normalize_inputs.*`) | External `PolicyProcessorPipeline` components |

|

||||

| **Model State Dict** | Contains normalization statistics | **Clean weights only** - no normalization parameters |

|

||||

| **Usage** | `policy(batch)` handles everything | `preprocessor(batch)` → `policy(...)` → `postprocessor(...)` |

|

||||

|

||||

### Impact on existing models

|

||||

|

||||

- Models trained **before** PR #1452 have normalization embedded in their weights

|

||||

- These models need migration to work with the new `PolicyProcessorPipeline` system

|

||||

- The migration extracts normalization statistics and creates separate processor pipelines

|

||||

|

||||

### Migrating old models

|

||||

|

||||

Use the migration script to convert models with embedded normalization:

|

||||

|

||||

```shell

|

||||

python src/lerobot/processor/migrate_policy_normalization.py \

|

||||

--pretrained-path lerobot/act_aloha_sim_transfer_cube_human \

|

||||

--push-to-hub \

|

||||

--branch migrated

|

||||

```

|

||||

|

||||

The script:

|

||||

|

||||

1. **Extracts** normalization statistics from model weights

|

||||

2. **Creates** external preprocessor and postprocessor pipelines

|

||||

3. **Removes** normalization layers from model weights

|

||||

4. **Saves** clean model + processor pipelines

|

||||

5. **Pushes** to Hub with automatic PR creation
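
As a rough illustration of steps 1 and 3 above, the following sketch splits a state dict into normalization entries and remaining weights. It is not the migration script itself: the helper `split_state_dict`, the example keys, and the use of plain lists instead of tensors are assumptions made for illustration. Only the `normalize_inputs.` prefix comes from the table above, and real checkpoints may use additional prefixes.

```python
from typing import Any

# Prefix named in the "What changed?" table; other prefixes may exist (assumption).
NORMALIZATION_PREFIX = "normalize_inputs."


def split_state_dict(state_dict: dict[str, Any]) -> tuple[dict[str, Any], dict[str, Any]]:
    """Separate normalization entries from the remaining model weights."""
    stats: dict[str, Any] = {}
    clean: dict[str, Any] = {}
    for key, value in state_dict.items():
        if key.startswith(NORMALIZATION_PREFIX):
            stats[key] = value  # would feed the external processor pipelines
        else:
            clean[key] = value  # stays in the saved model weights
    return stats, clean


# Hypothetical checkpoint contents, with lists standing in for tensors.
old_state_dict = {
    "normalize_inputs.observation_state.mean": [0.1, 0.2],
    "normalize_inputs.observation_state.std": [1.0, 2.0],
    "model.encoder.weight": [[0.5, -0.5]],
}

stats, clean = split_state_dict(old_state_dict)
print("normalization entries:", sorted(stats))
print("clean weights:", sorted(clean))
```

Keeping the two dictionaries separate mirrors the goal of the migration: the statistics move into processors, and the model file holds weights only.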

|

||||

|

||||

### Using migrated models

|

||||

|

||||

```python

|

||||

# New usage pattern (after migration)

|

||||

from lerobot.policies.factory import make_policy, make_pre_post_processors

|

||||

|

||||

# Load model and processors separately

|

||||

policy = make_policy(config, ds_meta=dataset.meta)

|

||||

preprocessor, postprocessor = make_pre_post_processors(

|

||||

policy_cfg=config,

|

||||

dataset_stats=dataset.meta.stats

|

||||

)

|

||||

|

||||

# Process data through pipeline

|

||||

processed_batch = preprocessor(raw_batch)

|

||||

action = policy.select_action(processed_batch)

|

||||

final_action = postprocessor(action)

|

||||

```

|

||||

|

||||

## Hardware API redesign

|

||||

|

||||

PR [#777](https://github.com/huggingface/lerobot/pull/777) improves the LeRobot calibration but is **not backward-compatible**. Below is an overview of what changed and how you can continue to work with datasets created before this pull request.

|

||||

|

||||

@@ -9,7 +9,7 @@ To instantiate a camera, you need a camera identifier. This identifier might cha

|

||||

To find the camera indices of the cameras plugged into your system, run the following script:

|

||||

|

||||

```bash

|

||||

lerobot-find-cameras opencv # or realsense for Intel Realsense cameras

|

||||

python -m lerobot.find_cameras opencv # or realsense for Intel Realsense cameras

|

||||

```

|

||||

|

||||

The output will look something like this if you have two cameras connected:

|

||||

|

||||

@@ -1,299 +0,0 @@

|

||||

# Debug Your Processor Pipeline

|

||||

|

||||

Processor pipelines can be complex, especially when chaining multiple transformation steps.

|

||||

Unlike simple function calls, pipelines lack natural observability: you can't easily see what happens

|

||||

between each step or where things go wrong.

|

||||

This guide provides debugging tools and techniques specifically designed to address these challenges

|

||||

and help you understand data flow through your pipelines.

|

||||

|

||||

We'll explore three complementary debugging approaches: **hooks** for runtime monitoring, **step-through debugging** for detailed inspection, and **feature validation** for catching structural mismatches. Each serves a different purpose and together they provide complete visibility into your pipeline's behavior.

|

||||

|

||||

## Understanding Hooks

|

||||

|

||||

Hooks are functions that get called at specific points during pipeline execution.

|

||||

They provide a way to inspect, monitor, or modify data without changing your pipeline code.

|

||||

Think of them as "event listeners" for your pipeline.

|

||||

|

||||

### What is a Hook?

|

||||

|

||||

A hook is a callback function that gets automatically invoked at specific moments during pipeline execution.

|

||||

The concept comes from event-driven programming: imagine you could "hook into" the pipeline's execution flow to observe or react to what's happening.

|

||||

|

||||

Think of hooks like inserting checkpoints into your pipeline. Every time the pipeline reaches one of these checkpoints, it pauses briefly to call your hook function, giving you a chance to inspect the current state, log information, and validate data.

|

||||

|

||||

A hook is simply a function that accepts two parameters:

|

||||

|

||||

- `step_idx: int` - The index of the current processing step (0, 1, 2, etc.)

|

||||

- `transition: EnvTransition` - The data transition at that point in the pipeline

|

||||

|

||||

The beauty of hooks is their non-invasive nature: you can add monitoring, validation, or debugging logic without changing a single line of your pipeline code. The pipeline remains clean and focused on its core logic, while hooks handle the cross-cutting concerns like logging, monitoring, and debugging.

|

||||

|

||||

### Before vs After Hooks

|

||||

|

||||

The pipeline supports two types of hooks:

|

||||

|

||||

- **Before hooks** (`register_before_step_hook`) - Called before each step executes

|

||||

- **After hooks** (`register_after_step_hook`) - Called after each step completes

|

||||

|

||||

```python

|

||||

def before_hook(step_idx: int, transition: EnvTransition):

|

||||

"""Called before step processes the transition."""

|

||||

print(f"About to execute step {step_idx}")

|

||||

# Useful for: logging, validation, setup

|

||||

|

||||

def after_hook(step_idx: int, transition: EnvTransition):

|

||||

"""Called after step has processed the transition."""

|

||||

print(f"Completed step {step_idx}")

|

||||

# Useful for: monitoring results, cleanup, debugging

|

||||

|

||||

processor.register_before_step_hook(before_hook)

|

||||

processor.register_after_step_hook(after_hook)

|

||||

```

|

||||

|

||||

### Implementing a NaN Detection Hook

|

||||

|

||||

Here's a practical example of a hook that detects NaN values:

|

||||

|

||||

```python

|

||||

def check_nans(step_idx: int, transition: EnvTransition):

|

||||

"""Check for NaN values in observations."""

|

||||

obs = transition.get(TransitionKey.OBSERVATION)

|

||||

if obs:

|

||||

for key, value in obs.items():

|

||||

if isinstance(value, torch.Tensor) and torch.isnan(value).any():

|

||||

print(f"NaN detected in {key} at step {step_idx}")

|

||||

|

||||

# Register the hook to run after each step

|

||||

processor.register_after_step_hook(check_nans)

|

||||

|

||||

# Process your data - the hook will be called automatically

|

||||

output = processor(input_data)

|

||||

|

||||

# Remove the hook when done debugging

|

||||

processor.unregister_after_step_hook(check_nans)

|

||||

```

|

||||

|

||||

### How Hooks Work Internally

|

||||

|

||||

Understanding the internal mechanism helps you use hooks more effectively. The pipeline maintains two separate lists: one for before-step hooks and another for after-step hooks. When you register a hook, it's simply appended to the appropriate list.

|

||||

|

||||

During execution, the pipeline follows a strict sequence: for each processing step, it first calls all before-hooks in registration order, then executes the actual step transformation, and finally calls all after-hooks in registration order. This creates a predictable, sandwich-like structure around each step.

|

||||

|

||||

The key insight is that hooks don't change the core pipeline logic—they're purely additive. The pipeline's `_forward` method orchestrates this dance between hooks and processing steps, ensuring that your debugging or monitoring code runs at exactly the right moments without interfering with the main data flow.

|

||||

|

||||

Here's a simplified view of how the pipeline executes hooks:

|

||||

|

||||

```python

|

||||

class DataProcessorPipeline:

|

||||

def __init__(self):

|

||||

self.steps = [...]

|

||||

self.before_step_hooks = [] # List of before hooks

|

||||

self.after_step_hooks = [] # List of after hooks

|

||||

|

||||

def _forward(self, transition):

|

||||

"""Internal method that processes the transition through all steps."""

|

||||

for step_idx, processor_step in enumerate(self.steps):

|

||||

# 1. Call all BEFORE hooks

|

||||

for hook in self.before_step_hooks:

|

||||

hook(step_idx, transition)

|

||||

|

||||

# 2. Execute the actual processing step

|

||||

transition = processor_step(transition)

|

||||

|

||||

# 3. Call all AFTER hooks

|

||||

for hook in self.after_step_hooks:

|

||||

hook(step_idx, transition)

|

||||

|

||||

return transition

|

||||

|

||||

def register_before_step_hook(self, hook_fn):

|

||||

self.before_step_hooks.append(hook_fn)

|

||||

|

||||

def register_after_step_hook(self, hook_fn):

|

||||

self.after_step_hooks.append(hook_fn)

|

||||

```

|

||||

|

||||

### Execution Flow

|

||||

|

||||

The execution flow looks like this:

|

||||

|

||||

```

|

||||

Input → Before Hook → Step 0 → After Hook → Before Hook → Step 1 → After Hook → ... → Output

|

||||

```

|

||||

|

||||

For example, with 3 steps and both hook types:

|

||||

|

||||

```python

|

||||

def timing_before(step_idx, transition):

|

||||

print(f"⏱️ Starting step {step_idx}")

|

||||

|

||||

def validation_after(step_idx, transition):

|

||||

print(f"✅ Completed step {step_idx}")

|

||||

|

||||

processor.register_before_step_hook(timing_before)

|

||||

processor.register_after_step_hook(validation_after)

|

||||

|

||||

# This will output:

|

||||

# ⏱️ Starting step 0

|

||||

# ✅ Completed step 0

|

||||

# ⏱️ Starting step 1

|

||||

# ✅ Completed step 1

|

||||

# ⏱️ Starting step 2

|

||||

# ✅ Completed step 2

|

||||

```

|

||||

|

||||

### Multiple Hooks

|

||||

|

||||

You can register multiple hooks of the same type - they execute in the order registered:

|

||||

|

||||

```python

|

||||

def log_shapes(step_idx: int, transition: EnvTransition):

|

||||

obs = transition.get(TransitionKey.OBSERVATION)

|

||||

if obs:

|

||||

print(f"Step {step_idx} observation shapes:")

|

||||

for key, value in obs.items():

|

||||

if isinstance(value, torch.Tensor):

|

||||

print(f" {key}: {value.shape}")

|

||||

|

||||

processor.register_after_step_hook(check_nans) # Executes first

|

||||

processor.register_after_step_hook(log_shapes) # Executes second

|

||||

|

||||

# Both hooks will be called after each step in registration order

|

||||

output = processor(input_data)

|

||||

```
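
Hooks can also collect metrics. The sketch below pairs a before-hook with an after-hook to time each step. `ToyPipeline` is a hypothetical stand-in that only mimics the hook calls described in this guide, and the hook names `start_timer` and `stop_timer` are likewise assumptions; with a real processor you would register the two functions through `register_before_step_hook` and `register_after_step_hook`.

```python
import time
from collections import defaultdict


class ToyPipeline:
    """Stand-in that calls before/after hooks around each step (illustration only)."""

    def __init__(self, steps):
        self.steps = steps
        self.before_step_hooks = []
        self.after_step_hooks = []

    def __call__(self, transition):
        for idx, step in enumerate(self.steps):
            for hook in self.before_step_hooks:
                hook(idx, transition)
            transition = step(transition)
            for hook in self.after_step_hooks:
                hook(idx, transition)
        return transition


started: dict[int, float] = {}
durations_ms: dict[int, list[float]] = defaultdict(list)


def start_timer(step_idx, transition):
    """Before-hook: remember when the step began."""
    started[step_idx] = time.perf_counter()


def stop_timer(step_idx, transition):
    """After-hook: record the elapsed time for the step in milliseconds."""
    durations_ms[step_idx].append((time.perf_counter() - started[step_idx]) * 1000)


pipeline = ToyPipeline([lambda t: t + 1, lambda t: t * 2])
pipeline.before_step_hooks.append(start_timer)
pipeline.after_step_hooks.append(stop_timer)

result = pipeline(3)
for idx, samples in sorted(durations_ms.items()):
    print(f"step {idx}: {samples[-1]:.4f} ms")
```

Because hooks run in registration order, any other hook registered between the two timers is included in the measurement, so it is usually best to register the start timer last and the stop timer first.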

|

||||

|

||||

While hooks are excellent for monitoring specific issues (like NaN detection) or gathering metrics during normal pipeline execution, sometimes you need to dive deeper. When you want to understand exactly what happens at each step or debug complex transformation logic, step-through debugging provides the detailed inspection you need.

|

||||

|

||||

## Step-Through Debugging

|

||||

|

||||

Step-through debugging is like having a slow-motion replay for your pipeline. Instead of watching your data get transformed in one quick blur from input to output, you can pause and examine what happens after each individual step.

|

||||

|

||||

This approach is particularly valuable when you're trying to understand a complex pipeline, debug unexpected behavior, or verify that each transformation is working as expected. Unlike hooks, which are great for automated monitoring, step-through debugging gives you manual, interactive control over the inspection process.

|

||||

|

||||

The `step_through()` method is a generator that yields the transition state after each processing step, allowing you to inspect intermediate results. Think of it as creating a series of snapshots of your data as it flows through the pipeline—each snapshot shows you exactly what your data looks like after one more transformation has been applied.

|

||||

|

||||

### How Step-Through Works

|

||||

|

||||

The `step_through()` method fundamentally changes how the pipeline executes. Instead of running all steps in sequence and only returning the final result, it transforms the pipeline into an iterator that yields intermediate results.

|

||||

|

||||

Here's what happens internally: the method starts by converting your input data into the pipeline's internal transition format, then yields this initial state. Next, it applies the first processing step and yields the result. Then it applies the second step to that result and yields again, and so on. Each `yield` gives you a complete snapshot of the transition at that point.

|

||||

|

||||

This generator pattern is powerful because it's lazy—the pipeline only computes the next step when you ask for it. This means you can stop at any point, inspect the current state thoroughly, and decide whether to continue. You're not forced to run the entire pipeline just to debug one problematic step.
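
The lazy behaviour can be seen in a small self-contained sketch. The `step_through` function below is a toy re-implementation of the pattern just described, not the library's method, and the steps `scale`, `shift`, and `clip` are made up for illustration.

```python
from collections.abc import Callable, Iterator

Step = Callable[[dict], dict]


def step_through(steps: list[Step], transition: dict) -> Iterator[dict]:
    """Yield the initial state, then the state after each step (toy stand-in)."""
    yield transition
    for step in steps:
        transition = step(transition)
        yield transition


calls: list[str] = []  # records which steps actually ran


def scale(t: dict) -> dict:
    calls.append("scale")
    return {**t, "x": t["x"] * 2}


def shift(t: dict) -> dict:
    calls.append("shift")
    return {**t, "x": t["x"] + 1}


def clip(t: dict) -> dict:
    calls.append("clip")
    return {**t, "x": min(t["x"], 5)}


gen = step_through([scale, shift, clip], {"x": 3})
initial = next(gen)      # the unprocessed input; no step has run yet
after_scale = next(gen)  # only `scale` has run
gen.close()              # stop early: `shift` and `clip` never execute

print(initial, after_scale, calls)
```

Closing the generator after the second snapshot leaves the remaining steps unexecuted, which is what makes it cheap to inspect an early stage of a long pipeline.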

|

||||

|

||||

Instead of running the entire pipeline and only seeing the final result, `step_through()` pauses after each step and gives you the intermediate transition:

|

||||

|

||||

```python

|

||||

# This creates a generator that yields intermediate states

|

||||

for i, intermediate_result in enumerate(processor.step_through(input_data)):

|

||||

print(f"=== Snapshot {i} (0 is the unprocessed input) ===")

|

||||

|

||||

# Inspect the observation at this stage

|

||||

obs = intermediate_result.get(TransitionKey.OBSERVATION)

|

||||

if obs:

|

||||

for key, value in obs.items():

|

||||

if isinstance(value, torch.Tensor):

|

||||

print(f"{key}: shape={value.shape}, dtype={value.dtype}")

|

||||

```

|

||||

|

||||

### Interactive Debugging with Breakpoints

|

||||

|

||||

You can add breakpoints in the step-through loop to interactively debug:

|

||||

|

||||

```python

|

||||

# Step through the pipeline with debugging

|

||||

for i, intermediate in enumerate(processor.step_through(data)):

|

||||

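# Snapshot 0 is the initial input, so snapshot i comes from steps[i - 1]
print(f"Step {i}: {processor.steps[i - 1].__class__.__name__ if i > 0 else 'initial input'}")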

|

||||

|

||||

# Set a breakpoint to inspect the current state

|

||||

breakpoint() # Debugger will pause here

|

||||

|

||||

# You can now inspect 'intermediate' in the debugger:

|

||||

# - Check tensor shapes and values

|

||||

# - Verify expected transformations

|

||||

# - Look for unexpected changes

|

||||

```

|

||||

|

||||

During the debugger session, you can:

|

||||

|

||||

- Examine `intermediate[TransitionKey.OBSERVATION]` to see observation data

|

||||

- Check `intermediate[TransitionKey.ACTION]` for action transformations

|

||||

- Inspect any part of the transition to understand what each step does

|

||||

|

||||

Step-through debugging is perfect for understanding the _data_ transformations, but what about the _structure_ of that data? While hooks and step-through help you debug runtime behavior, you also need to ensure your pipeline produces data in the format expected by downstream components. This is where feature contract validation comes in.

|

||||

|

||||

## Validating Feature Contracts

|

||||

|

||||

Feature contracts define what data structure your pipeline expects as input and produces as output.

|

||||

Validating these contracts helps catch mismatches early.
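
To make the idea of a contract mismatch concrete, here is a minimal sketch that compares an expected feature description with the one a pipeline reports. The `Feature` dataclass and the `contract_mismatches` helper are hypothetical stand-ins written for this example and are not part of the LeRobot API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    """Hypothetical stand-in for a feature description: a kind and a shape."""

    kind: str
    shape: tuple[int, ...]


def contract_mismatches(expected: dict[str, Feature], actual: dict[str, Feature]) -> list[str]:
    """Return human-readable differences between two feature descriptions."""
    problems = []
    for key, want in expected.items():
        got = actual.get(key)
        if got is None:
            problems.append(f"missing feature: {key}")
        elif got != want:
            problems.append(f"{key}: expected {want}, got {got}")
    for key in sorted(actual.keys() - expected.keys()):
        problems.append(f"unexpected feature: {key}")
    return problems


expected = {
    "observation.state": Feature("STATE", (7,)),
    "action": Feature("ACTION", (4,)),
}
actual = {
    "observation.state": Feature("STATE", (6,)),  # wrong shape
    "observation.image": Feature("IMAGE", (3, 224, 224)),  # not expected
}

problems = contract_mismatches(expected, actual)
for line in problems:
    print(line)
```

Running a check like this before training or deployment surfaces a wrong shape or a missing key as a readable message rather than a tensor error deep inside the policy.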

|

||||

|

||||

### Understanding Feature Contracts

|

||||

|

||||

Each processor step has a `transform_features()` method that describes how it changes the data structure:

|

||||

|

||||

```python

|

||||

# Get the expected output features from your pipeline

|

||||

initial_features = {

|

||||

PipelineFeatureType.OBSERVATION: {

|

||||

"observation.state": PolicyFeature(type=FeatureType.STATE, shape=(7,)),

|

||||

"observation.image": PolicyFeature(type=FeatureType.IMAGE, shape=(3, 224, 224))

|

||||

},

|

||||

PipelineFeatureType.ACTION: {

|

||||

"action": PolicyFeature(type=FeatureType.ACTION, shape=(4,))

|

||||

}

|

||||

}

|

||||

|

||||

# Check what your pipeline will output

|

||||

output_features = processor.transform_features(initial_features)

|

||||

|

||||

print("Input features:")

|

||||

for feature_type, features in initial_features.items():

|

||||

print(f" {feature_type}:")

|

||||

for key, feature in features.items():

|

||||

print(f" {key}: {feature.type.value}, shape={feature.shape}")

|

||||

|

||||

print("\nOutput features:")

|

||||

for feature_type, features in output_features.items():

|

||||

print(f" {feature_type}:")

|

||||

for key, feature in features.items():

|

||||