mirror of

https://github.com/huggingface/lerobot.git

synced 2026-05-11 14:49:43 +00:00

Compare commits

130 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

| 7788db7838 | |||

| d883c78a94 | |||

| de42da8225 | |||

| d0d714be47 | |||

| 7d9b469eee | |||

| 6db39cad58 | |||

| af0676f99e | |||

| b9df1a4ac5 | |||

| 5361346bec | |||

| f0b969ae48 | |||

| a9d54cbddb | |||

| c5a029a28a | |||

| c8163662ad | |||

| 376cc772ff | |||

| d1eefd4e97 | |||

| 7a03223693 | |||

| f840d2e006 | |||

| e94844fa59 | |||

| 990f8e9cc9 | |||

| 6ce2a00135 | |||

| bf90efa7e1 | |||

| 5b4ac3068e | |||

| dbe3406a69 | |||

| 1785767e61 | |||

| afd833f49e | |||

| 2234b851c0 | |||

| e4a214d890 | |||

| e8438aac59 | |||

| 8fe977118b | |||

| d09b2a28af | |||

| f2530570e0 | |||

| 8567ab60d8 | |||

| 9784123463 | |||

| 4c2add41d7 | |||

| a19d7fb6bf | |||

| 565c992589 | |||

| 96cc634a66 | |||

| b044f3104b | |||

| 384ec52ec7 | |||

| 8d1434c069 | |||

| f613a37cd2 | |||

| 494aa576b2 | |||

| 514625a7f6 | |||

| 9f7bfeb419 | |||

| aa40c8c813 | |||

| d36bdac114 | |||

| ff1666b216 | |||

| c57d3a9688 | |||

| 9ae11a087d | |||

| 21e63b505f | |||

| e9e7eb827a | |||

| ac323b0113 | |||

| b028907d21 | |||

| 2eafcc7ca1 | |||

| b3b57a8288 | |||

| eaaf1c1766 | |||

| 3bc3bf0391 | |||

| 8c5fe10d6c | |||

| 8178a06b90 | |||

| 9ea8bd029c | |||

| bd5c264c49 | |||

| 5c628f1700 | |||

| 9beafe0c19 | |||

| 27c9db60a6 | |||

| fda5fb5e94 | |||

| 5f5438d6fa | |||

| 2b779cd6c6 | |||

| 3886af42a5 | |||

| 38f7229078 | |||

| 504421949c | |||

| 28b9efc04f | |||

| abba423e28 | |||

| 47a81c4150 | |||

| 1ba896598e | |||

| 61e55830da | |||

| b7522da85d | |||

| 98dc053e6d | |||

| bbff93d20d | |||

| 32c1649085 | |||

| eb564f8ddb | |||

| a2958a8e0c | |||

| 8f1679f309 | |||

| b1473f11c8 | |||

| 7b556079d8 | |||

| e91a773b93 | |||

| a9bd67eae9 | |||

| 4a4ac759ec | |||

| 7dd8e015f8 | |||

| af2960c33e | |||

| a36e4619ad | |||

| b397a757bb | |||

| 92adf2218f | |||

| f3614dd812 | |||

| b23b7a5bd7 | |||

| 6ff5f318b2 | |||

| 2eae751977 | |||

| 894878039d | |||

| ab72471dda | |||

| 23849e0cb8 | |||

| cb18fc07ef | |||

| 440e22c184 | |||

| 28b69bf8ba | |||

| b997fdde96 | |||

| 6f975cf576 | |||

| 2688731064 | |||

| fe20437b62 | |||

| ff861ba869 | |||

| 4be3942cbc | |||

| fd5afdfbf0 | |||

| 8d2c66abd2 | |||

| afad90ffaa | |||

| f5091448a8 | |||

| cc46497f4c | |||

| 5d25f5bd40 | |||

| ce83752f16 | |||

| 4ed6cf159d | |||

| 7626d26e6a | |||

| 14a59f576b | |||

| eb3649292b | |||

| ac0993c2e3 | |||

| c20bf75ba0 | |||

| a25480d363 | |||

| 4c19a71d7c | |||

| d2684d41cd | |||

| 4e76c1f88c | |||

| 3bf0c19be7 | |||

| ad4f510262 | |||

| 9124b36b0a | |||

| 4bc356b7f3 | |||

| 21a961ecbb |

@@ -19,6 +19,8 @@

|

||||

title: Train RL in Simulation

|

||||

- local: async

|

||||

title: Use Async Inference

|

||||

- local: libero

|

||||

title: Using LIBERO

|

||||

title: "Tutorials"

|

||||

- sections:

|

||||

- local: smolvla

|

||||

|

||||

@@ -0,0 +1,230 @@

|

||||

# LIBERO

|

||||

|

||||

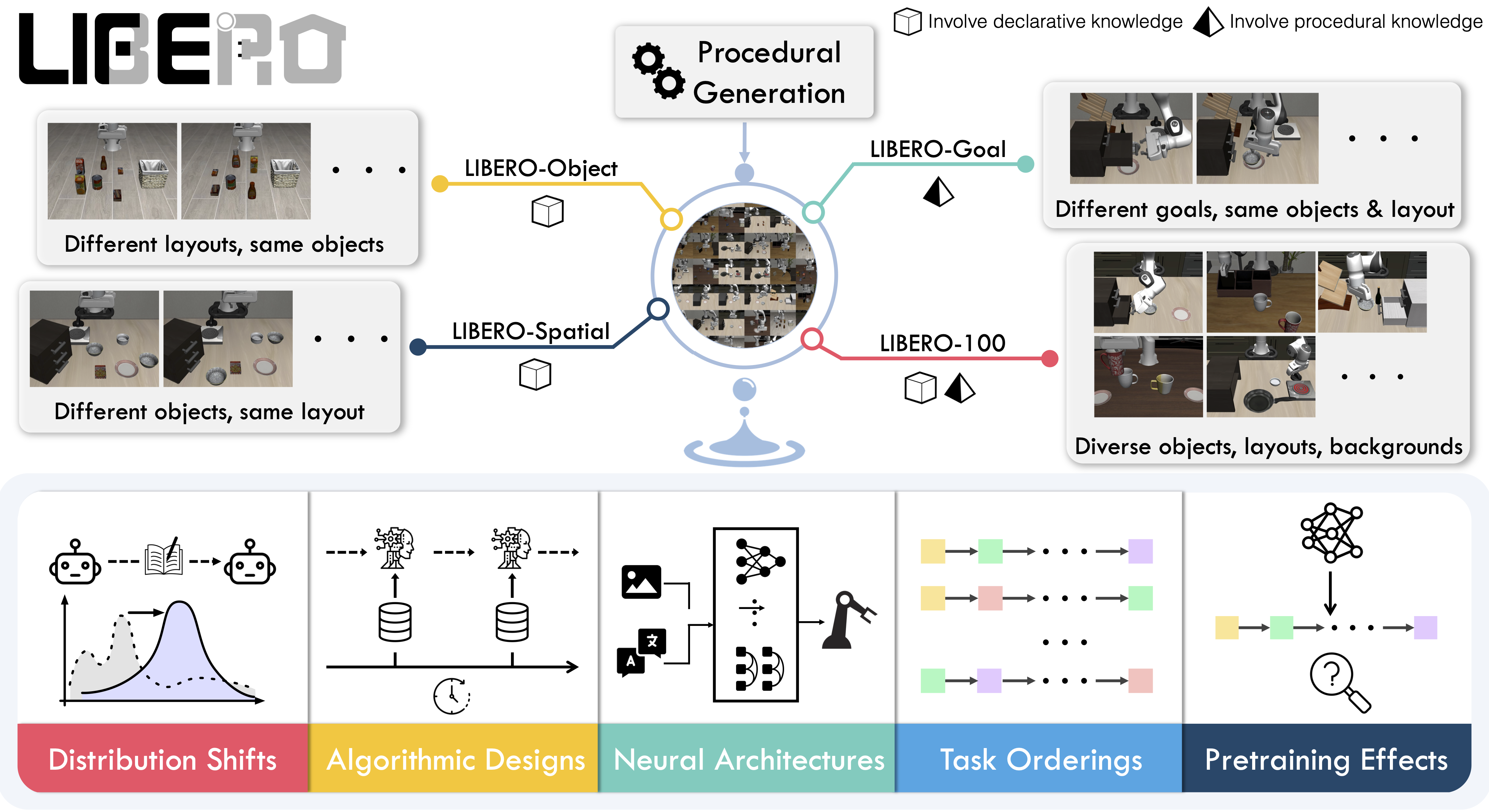

**LIBERO** is a benchmark designed to study **lifelong robot learning**. The idea is that robots won’t just be pretrained once in a factory, they’ll need to keep learning and adapting with their human users over time. This ongoing adaptation is called **lifelong learning in decision making (LLDM)**, and it’s a key step toward building robots that become truly personalized helpers. The benchmark was first introduced in the [LIBERO paper](https://arxiv.org/abs/2306.03310) and the [original repository](https://github.com/Lifelong-Robot-Learning/LIBERO).

|

||||

|

||||

To make progress on this challenge, LIBERO provides a set of standardized tasks that focus on **knowledge transfer**: how well a robot can apply what it has already learned to new situations. By evaluating on LIBERO, different algorithms can be compared fairly and researchers can build on each other’s work.

|

||||

|

||||

LIBERO includes **five task suites**:

|

||||

|

||||

- **LIBERO-Spatial (`libero_spatial`)** – tasks that require reasoning about spatial relations.

|

||||

- **LIBERO-Object (`libero_object`)** – tasks centered on manipulating different objects.

|

||||

- **LIBERO-Goal (`libero_goal`)** – goal-conditioned tasks where the robot must adapt to changing targets.

|

||||

- **LIBERO-90 (`libero_90`)** – 90 short-horizon tasks from the LIBERO-100 collection.

|

||||

- **LIBERO-Long (`libero_10`)** – 10 long-horizon tasks from the LIBERO-100 collection.

|

||||

|

||||

Together, these suites cover **130 tasks**, ranging from simple object manipulations to complex multi-step scenarios. LIBERO is meant to grow over time, and to serve as a shared benchmark where the community can test and improve lifelong learning algorithms.

|

||||

|

||||

|

||||

_Figure 1: An overview of the LIBERO benchmark._

|

||||

|

||||

## Evaluating with LIBERO

|

||||

|

||||

At **LeRobot**, we ported [LIBERO](https://github.com/Lifelong-Robot-Learning/LIBERO) into our framework and used it primarily to **benchmark [SmolVLA](https://huggingface.co/docs/lerobot/en/smolvla)**, our lightweight Vision-Language-Action model, comparing it against state-of-the-art VLA models such as Pi0, OpenVLA, Octo, and Diffusion Policy.

|

||||

|

||||

LIBERO is now part of our **multi-eval supported simulation**, allowing you to benchmark your policies either on a **single suite of tasks** or across **multiple suites at once** with just a single flag.

|

||||

|

||||

To install LIBERO, first follow the [LeRobot Installation Guide](https://huggingface.co/docs/lerobot/installation).

|

||||

Once LeRobot is installed, there are two options:

|

||||

|

||||

1. **Install via pip** (recommended):

|

||||

|

||||

```bash

|

||||

pip install "lerobot[libero,smolvla]"

|

||||

```

|

||||

|

||||

2. **Install from source**:

|

||||

```bash

|

||||

git clone https://github.com/huggingface/lerobot.git

|

||||

cd lerobot

|

||||

pip install -e ".[libero,smolvla]"

|

||||

```

|

||||

|

||||

### Single-suite evaluation

|

||||

|

||||

Evaluate a policy on one LIBERO suite:

|

||||

|

||||

```bash

|

||||

python src/lerobot/scripts/eval.py \

|

||||

--policy.path="your-policy-id" \

|

||||

--env.type=libero \

|

||||

--env.task=libero_object \

|

||||

--env.multitask_eval=False \

|

||||

--eval.batch_size=2 \

|

||||

--eval.n_episodes=3

|

||||

```

|

||||

|

||||

- `--env.task` picks the suite (`libero_object`, `libero_spatial`, etc.).

|

||||

- `--eval.batch_size` controls how many environments run in parallel.

|

||||

- `--eval.n_episodes` sets how many episodes to run in total.

|

||||

|

||||

---

|

||||

|

||||

### Multi-suite evaluation

|

||||

|

||||

Benchmark a policy across multiple suites at once:

|

||||

|

||||

```bash

|

||||

python src/lerobot/scripts/eval.py \

|

||||

--policy.path="your-policy-id" \

|

||||

--env.type=libero \

|

||||

--env.task=libero_object \

|

||||

--env.multitask_eval=True \

|

||||

--eval.batch_size=1 \

|

||||

--eval.n_episodes=2

|

||||

```

|

||||

|

||||

- Pass a comma-separated list to `--env.task` for multi-suite evaluation.

|

||||

- Set `-env.multitask_eval=True` to enable evaluation across all tasks in those suites.

|

||||

|

||||

### Policy inputs and outputs

|

||||

|

||||

When using LIBERO through LeRobot, policies interact with the environment via **observations** and **actions**:

|

||||

|

||||

- **Observations**

|

||||

- `observation.state` – proprioceptive features (agent state).

|

||||

- `observation.images.image` – main camera view (`agentview_image`).

|

||||

- `observation.images.image2` – wrist camera view (`robot0_eye_in_hand_image`).

|

||||

|

||||

⚠️ **Note:** LeRobot enforces the `.images.*` prefix for any visual features. Make sure your dataset metadata keys match this convention when evaluating.

|

||||

|

||||

## Input Features and Metadata Alignment

|

||||

|

||||

To train or evaluate a policy, you use `make_policy`, which builds a feature-naming dictionary for the observations the policy expects.

|

||||

This mapping can come from:

|

||||

- Dataset metadata

|

||||

- The evaluation environment

|

||||

- The policy path (if a pretrained repo ID is provided)

|

||||

|

||||

### Common Issues

|

||||

|

||||

A common problem is when the keys in the dataset, environment, and policy config do not match. For example:

|

||||

- `wrist_image` vs `observation.images.image2`

|

||||

- `observation.image2` (as in SmolVLA) vs the `.images.*` prefix convention

|

||||

|

||||

Such mismatches will cause `KeyError`s. This may be due to assumptions in `make_policy` or missing error handling.

|

||||

|

||||

***

|

||||

|

||||

### How to Check Expected Features

|

||||

- Open your policy config (`config.json`), e.g. [example here](https://huggingface.co/jadechoghari/smolvla-libero/blob/main/config.json).

|

||||

- Or add a breakpoint in `train.py` and inspect:

|

||||

|

||||

````python

|

||||

print(policy.config.input_features)

|

||||

To ensure you can just check what your policy expects as `input_features`:

|

||||

|

||||

- Open your policy config (`config.json`), e.g. [example here](https://huggingface.co/jadechoghari/smolvla-libero/blob/main/config.json).

|

||||

- Or add a breakpoint in `train.py` and inspect:

|

||||

```python

|

||||

print(policy.config.input_features)

|

||||

Fixing KeyErrors (Preprocessing Example)

|

||||

````

|

||||

|

||||

## Fixing KeyErrors (Preprocessing Example)

|

||||

|

||||

If your dataset columns do not follow the expected naming, you can rename them in-place before training:

|

||||

|

||||

````python

|

||||

import pyarrow.parquet as pq

|

||||

import shutil

|

||||

|

||||

def rename_columns(parquet_path, rename_map):

|

||||

table = pq.read_table(parquet_path)

|

||||

schema = table.schema

|

||||

new_names = [rename_map.get(name, name) for name in schema.names]

|

||||

renamed_table = table.rename_columns(new_names)

|

||||

backup_path = parquet_path + ".bak"

|

||||

shutil.copy(parquet_path, backup_path)

|

||||

pq.write_table(renamed_table, parquet_path)

|

||||

print(f"patched {parquet_path}, backup at {backup_path}")

|

||||

|

||||

# example mapping: align dataset keys to LeRobot convention

|

||||

rename_map = {

|

||||

"image": "observation.images.image",

|

||||

"wrist_image": "observation.images.image2",

|

||||

}

|

||||

|

||||

rename_columns("episode_000001.parquet", rename_map)

|

||||

|

||||

|

||||

|

||||

- **Actions**

|

||||

- Continuous control values in a `Box(-1, 1, shape=(7,))` space.

|

||||

|

||||

We also provide a notebook for quick testing:

|

||||

Training with LIBERO

|

||||

|

||||

## Training with LIBERO

|

||||

|

||||

When training on LIBERO tasks, make sure your dataset parquet and metadata keys follow the LeRobot convention.

|

||||

|

||||

The environment expects:

|

||||

|

||||

- `observation.state` → 8-dim agent state

|

||||

- `observation.images.image` → main camera (`agentview_image`)

|

||||

- `observation.images.image2` → wrist camera (`robot0_eye_in_hand_image`)

|

||||

|

||||

⚠️ Cleaning the dataset upfront is **cleaner and more efficient** than remapping keys inside the code. We plan to provide a script to easily preprocess such data.

|

||||

To avoid potential mismatches and `KeyError`s, we provide a **preprocessed LIBERO dataset** that is fully compatible with the current LeRobot codebase and requires no additional manipulations.

|

||||

|

||||

- 🔗 [Preprocessed LIBERO dataset (Hugging Face LeRobot org)](https://huggingface.co/datasets/HuggingFaceVLA/libero)

|

||||

- 🔗 [Original LIBERO dataset (physical-intelligence)](https://huggingface.co/datasets/physical-intelligence/libero)

|

||||

|

||||

The preprocessed dataset follows LeRobot naming conventions (e.g., `.images.*` prefix for visual features) and aligns with policy configs out-of-the-box.

|

||||

The original dataset is acknowledged here as the primary source.

|

||||

---

|

||||

|

||||

### Example training command

|

||||

|

||||

```bash

|

||||

python src/lerobot/scripts/train.py \

|

||||

--policy.type=smolvla \

|

||||

--policy.repo_id=${HF_USER}/libero-test \

|

||||

--dataset.repo_id=jadechoghari/smol-libero3 \

|

||||

--env.type=libero \

|

||||

--env.task=libero_10 \

|

||||

--output_dir=./outputs/ \

|

||||

--steps=100000 \

|

||||

--batch_size=4 \

|

||||

--env.multitask_eval=True \

|

||||

--eval.batch_size=1 \

|

||||

--eval.n_episodes=1 \

|

||||

--eval_freq=1000 \

|

||||

````

|

||||

|

||||

---

|

||||

|

||||

### Note on rendering

|

||||

|

||||

LeRobot uses MuJoCo for simulation. You need to set the rendering backend before training or evaluation:

|

||||

|

||||

- `export MUJOCO_GL=egl` → for headless servers (e.g. HPC, cloud)

|

||||

|

||||

---

|

||||

|

||||

## Colab Note on Parallel Evaluation

|

||||

|

||||

When running evaluation on Colab, you may encounter warnings such as:

|

||||

|

||||

```

|

||||

UserWarning: resource_tracker: There appear to be 1 leaked semaphore objects to clean up at shutdown

|

||||

```

|

||||

|

||||

This happens because Colab’s rendering contexts are **not thread-safe**, and `ThreadPoolExecutor(max_workers=num_workers)` can trigger segfaults or leaked semaphore warnings.

|

||||

|

||||

**Colab Note:**

|

||||

Parallel evaluation is not supported in Colab. To avoid these issues, run sequentially or disable multitask evaluation:

|

||||

|

||||

Run sequentially:

|

||||

|

||||

```bash

|

||||

--env.max_parallel_tasks=1

|

||||

```

|

||||

|

||||

Or disable multitask evaluation:

|

||||

|

||||

```bash

|

||||

--env.multitask_eval=False

|

||||

```

|

||||

|

||||

If you want to take advantage of **parallel evaluation**, we recommend **not using Colab**. Instead, run locally or on a proper compute environment where multi-threaded rendering is easily supported.

|

||||

@@ -0,0 +1,58 @@

|

||||

#!/bin/bash

|

||||

|

||||

# storage / caches

|

||||

RAID=/raid/jade

|

||||

export TRANSFORMERS_CACHE=$RAID/.cache/huggingface/transformers

|

||||

export HF_HOME=$RAID/.cache/huggingface

|

||||

export HF_DATASETS_CACHE=$RAID/.cache/huggingface/datasets

|

||||

export HF_LEROBOT_HOME=$RAID/.cache/huggingface/lerobot

|

||||

export WANDB_CACHE_DIR=$RAID/.cache/wandb

|

||||

export TMPDIR=$RAID/.cache/tmp

|

||||

mkdir -p $TMPDIR

|

||||

export WANDB_MODE=offline

|

||||

export HF_DATASETS_OFFLINE=1

|

||||

export HF_HUB_OFFLINE=1

|

||||

export TOKENIZERS_PARALLELISM=false

|

||||

export MUJOCO_GL=egl

|

||||

export CUDA_VISIBLE_DEVICES=2

|

||||

|

||||

# CONFIGURATION

|

||||

POLICY_PATH="/raid/jade/logs/lerobot/lerobot_2_HuggingFaceVLA_libero_smolvla_lr1e-4bs32steps100000/checkpoints/100000/pretrained_model"

|

||||

POLICY_PATH="/raid/jade/models/smolvlamust"

|

||||

TASK=libero_spatial,libero_object

|

||||

ENV_TYPE="libero"

|

||||

BATCH_SIZE=1

|

||||

N_EPISODES=1

|

||||

# storage / caches

|

||||

RAID=/raid/jade

|

||||

N_ACTION_STEPS=1

|

||||

export TRANSFORMERS_CACHE=$RAID/.cache/huggingface/transformers

|

||||

export HF_HOME=$RAID/.cache/huggingface

|

||||

export HF_DATASETS_CACHE=$RAID/.cache/huggingface/datasets

|

||||

export HF_LEROBOT_HOME=$RAID/.cache/huggingface/lerobot

|

||||

export WANDB_CACHE_DIR=$RAID/.cache/wandb

|

||||

export TMPDIR=$RAID/.cache/tmp

|

||||

mkdir -p $TMPDIR

|

||||

export WANDB_MODE=offline

|

||||

# export HF_DATASETS_OFFLINE=1

|

||||

# export HF_HUB_OFFLINE=1

|

||||

export TOKENIZERS_PARALLELISM=false

|

||||

export MUJOCO_GL=egl

|

||||

export MUJOCO_GL=egl

|

||||

unset HF_HUB_OFFLINE

|

||||

# RUN EVALUATION

|

||||

python src/lerobot/scripts/eval.py \

|

||||

--policy.path="$POLICY_PATH" \

|

||||

--env.type="$ENV_TYPE" \

|

||||

--eval.batch_size="$BATCH_SIZE" \

|

||||

--eval.n_episodes="$N_EPISODES" \

|

||||

--env.multitask_eval=True \

|

||||

--env.task=$TASK \

|

||||

# python examples/evaluate_libero.py \

|

||||

# --policy_path "$POLICY_PATH" \

|

||||

# --task_suite_name "$TASK" \

|

||||

# --num_steps_wait 10 \

|

||||

# --num_trials_per_task 10 \

|

||||

# --video_out_path "data/libero/videos" \

|

||||

# --device "cuda" \

|

||||

# --seed 7

|

||||

@@ -0,0 +1,193 @@

|

||||

#!/usr/bin/env python

|

||||

|

||||

"""Script to create and push a PI0OpenPI model to HuggingFace hub with proper config format."""

|

||||

|

||||

import tempfile

|

||||

from pathlib import Path

|

||||

|

||||

import torch

|

||||

from huggingface_hub import HfApi, create_repo

|

||||

|

||||

from lerobot.policies.pi0_openpi import PI0OpenPIConfig, PI0OpenPIPolicy

|

||||

|

||||

|

||||

def create_and_push_model(

|

||||

repo_id: str,

|

||||

private: bool = False,

|

||||

token: str = None,

|

||||

):

|

||||

"""Create a PI0OpenPI model with proper config and push to HuggingFace hub.

|

||||

|

||||

Args:

|

||||

repo_id: HuggingFace repository ID (e.g., "username/model-name")

|

||||

private: Whether to create a private repository

|

||||

token: HuggingFace API token (optional, will use cached token if not provided)

|

||||

"""

|

||||

print("=" * 60)

|

||||

print("PI0OpenPI Model Hub Upload")

|

||||

print("=" * 60)

|

||||

|

||||

# Create configuration

|

||||

print("\nCreating PI0OpenPI configuration...")

|

||||

config = PI0OpenPIConfig(

|

||||

# Model architecture

|

||||

paligemma_variant="gemma_2b",

|

||||

action_expert_variant="gemma_300m",

|

||||

pi05=False, # Use PI0 (not PI0.5)

|

||||

dtype="float32", # Use float32 for compatibility

|

||||

# Input/output dimensions

|

||||

action_dim=32, # see openpi `Pi0Config`

|

||||

state_dim=32,

|

||||

chunk_size=50,

|

||||

n_action_steps=50,

|

||||

# Image inputs, see openpi `model.py, IMAGE_KEYS`

|

||||

image_keys=(

|

||||

"observation.images.base_0_rgb",

|

||||

"observation.images.left_wrist_0_rgb",

|

||||

"observation.images.right_wrist_0_rgb",

|

||||

),

|

||||

# Training settings

|

||||

gradient_checkpointing=False,

|

||||

compile_model=False,

|

||||

device=None, # Auto-detect

|

||||

# Tokenizer settings

|

||||

tokenizer_max_length=48, # see openpi `__post_init__`, use pi0=48 and pi05=200

|

||||

)

|

||||

|

||||

print(f" - Config type: {config.__class__.__name__}")

|

||||

print(f" - PaliGemma variant: {config.paligemma_variant}")

|

||||

print(f" - Action expert variant: {config.action_expert_variant}")

|

||||

print(f" - Action dim: {config.action_dim}")

|

||||

print(f" - State dim: {config.state_dim}")

|

||||

|

||||

# Create dummy dataset stats for normalization

|

||||

print("\nCreating dataset statistics...")

|

||||

dataset_stats = {

|

||||

"observation.state": {

|

||||

"mean": torch.zeros(config.state_dim),

|

||||

"std": torch.ones(config.state_dim),

|

||||

"min": torch.full((config.state_dim,), -5.0),

|

||||

"max": torch.full((config.state_dim,), 5.0),

|

||||

},

|

||||

"action": {

|

||||

"mean": torch.zeros(config.action_dim),

|

||||

"std": torch.ones(config.action_dim),

|

||||

"min": torch.full((config.action_dim,), -1.0),

|

||||

"max": torch.full((config.action_dim,), 1.0),

|

||||

},

|

||||

}

|

||||

|

||||

# Add image stats

|

||||

for key in config.image_keys:

|

||||

dataset_stats[key] = {

|

||||

"mean": torch.tensor([0.485, 0.456, 0.406]), # TODO(pepijn): fix this, now its ImageNet mean

|

||||

"std": torch.tensor([0.229, 0.224, 0.225]), # TODO(pepijn): fix this, now its ImageNet std

|

||||

"min": torch.tensor([0.0, 0.0, 0.0]),

|

||||

"max": torch.tensor([1.0, 1.0, 1.0]),

|

||||

}

|

||||

|

||||

# Create the policy

|

||||

print("\nInitializing PI0OpenPI policy...")

|

||||

print(" (This may take a moment as it loads the tokenizer and initializes the model)")

|

||||

policy = PI0OpenPIPolicy(config, dataset_stats)

|

||||

|

||||

# Initialize with small random weights (optional - for testing)

|

||||

# Note: In practice, you would load your trained weights here

|

||||

print("\nInitializing model weights...")

|

||||

for name, param in policy.named_parameters():

|

||||

if "weight" in name:

|

||||

if "norm" in name.lower() or "layernorm" in name.lower():

|

||||

torch.nn.init.ones_(param)

|

||||

elif len(param.shape) >= 2:

|

||||

torch.nn.init.xavier_uniform_(param, gain=0.01)

|

||||

else:

|

||||

torch.nn.init.normal_(param, mean=0.0, std=0.01)

|

||||

elif "bias" in name:

|

||||

torch.nn.init.zeros_(param)

|

||||

|

||||

print(f" - Total parameters: {sum(p.numel() for p in policy.parameters()):,}")

|

||||

print(f" - Trainable parameters: {sum(p.numel() for p in policy.parameters() if p.requires_grad):,}")

|

||||

|

||||

# Create temporary directory for saving

|

||||

with tempfile.TemporaryDirectory() as tmpdir:

|

||||

save_path = Path(tmpdir) / "model"

|

||||

save_path.mkdir(exist_ok=True)

|

||||

|

||||

print(f"\nSaving model to temporary directory: {save_path}")

|

||||

|

||||

# Save the model using LeRobot's save_pretrained method

|

||||

# This ensures the config is saved in the correct format

|

||||

policy.save_pretrained(save_path)

|

||||

|

||||

# List saved files

|

||||

saved_files = list(save_path.glob("*"))

|

||||

print("\nSaved files:")

|

||||

for file in saved_files:

|

||||

size = file.stat().st_size

|

||||

print(f" - {file.name}: {size:,} bytes")

|

||||

|

||||

# Create or get repository

|

||||

print(f"\nCreating/accessing repository: {repo_id}")

|

||||

api = HfApi(token=token)

|

||||

|

||||

try:

|

||||

# Create repo if it doesn't exist

|

||||

create_repo(

|

||||

repo_id,

|

||||

private=private,

|

||||

token=token,

|

||||

exist_ok=True,

|

||||

)

|

||||

print(f" ✓ Repository ready: https://huggingface.co/{repo_id}")

|

||||

except Exception as e:

|

||||

print(f" ⚠️ Note: {e}")

|

||||

|

||||

# Upload to hub

|

||||

print("\nUploading to HuggingFace hub...")

|

||||

api.upload_folder(

|

||||

folder_path=str(save_path),

|

||||

repo_id=repo_id,

|

||||

repo_type="model",

|

||||

token=token,

|

||||

commit_message="Upload PI0OpenPI model with proper LeRobot config format",

|

||||

)

|

||||

|

||||

print(f"\n✓ Model successfully uploaded to: https://huggingface.co/{repo_id}")

|

||||

|

||||

print("\n" + "=" * 60)

|

||||

print("✓ Process complete!")

|

||||

print("=" * 60)

|

||||

|

||||

return policy

|

||||

|

||||

|

||||

if __name__ == "__main__":

|

||||

import argparse

|

||||

|

||||

parser = argparse.ArgumentParser(description="Push PI0OpenPI model to HuggingFace hub")

|

||||

parser.add_argument(

|

||||

"--repo-id",

|

||||

type=str,

|

||||

default="test-user/pi0-openpi-test",

|

||||

help="HuggingFace repository ID (e.g., 'username/model-name')",

|

||||

)

|

||||

parser.add_argument(

|

||||

"--private",

|

||||

action="store_true",

|

||||

help="Create a private repository",

|

||||

)

|

||||

parser.add_argument(

|

||||

"--token",

|

||||

type=str,

|

||||

default=None,

|

||||

help="HuggingFace API token (optional, uses cached token if not provided)",

|

||||

)

|

||||

|

||||

args = parser.parse_args()

|

||||

|

||||

# Run the upload

|

||||

create_and_push_model(

|

||||

repo_id=args.repo_id,

|

||||

private=args.private,

|

||||

token=args.token,

|

||||

)

|

||||

+26

-6

@@ -29,7 +29,7 @@ version = "0.3.4"

|

||||

description = "🤗 LeRobot: State-of-the-art Machine Learning for Real-World Robotics in Pytorch"

|

||||

readme = "README.md"

|

||||

license = { text = "Apache-2.0" }

|

||||

requires-python = ">=3.10"

|

||||

requires-python = ">=3.11"

|

||||

authors = [

|

||||

{ name = "Rémi Cadène", email = "re.cadene@gmail.com" },

|

||||

{ name = "Simon Alibert", email = "alibert.sim@gmail.com" },

|

||||

@@ -50,7 +50,7 @@ classifiers = [

|

||||

"Intended Audience :: Education",

|

||||

"Intended Audience :: Science/Research",

|

||||

"License :: OSI Approved :: Apache Software License",

|

||||

"Programming Language :: Python :: 3.10",

|

||||

"Programming Language :: Python :: 3.11",

|

||||

"Topic :: Software Development :: Build Tools",

|

||||

"Topic :: Scientific/Engineering :: Artificial Intelligence",

|

||||

]

|

||||

@@ -95,7 +95,7 @@ dependencies = [

|

||||

# Common

|

||||

pygame-dep = ["pygame>=2.5.1"]

|

||||

placo-dep = ["placo>=0.9.6"]

|

||||

transformers-dep = ["transformers>=4.50.3,<4.52.0"] # TODO: Bumb dependency

|

||||

transformers-dep = ["transformers==4.53.2"]

|

||||

grpcio-dep = ["grpcio==1.73.1", "protobuf==6.31.0"]

|

||||

|

||||

# Motors

|

||||

@@ -135,7 +135,26 @@ video_benchmark = ["scikit-image>=0.23.2", "pandas>=2.2.2"]

|

||||

aloha = ["gym-aloha>=0.1.1"]

|

||||

pusht = ["gym-pusht>=0.1.5", "pymunk>=6.6.0,<7.0.0"] # TODO: Fix pymunk version in gym-pusht instead

|

||||

xarm = ["gym-xarm>=0.1.1"]

|

||||

|

||||

libero = [

|

||||

"hydra-core>=1.2,<1.4",

|

||||

"numpy",

|

||||

"wandb",

|

||||

"easydict",

|

||||

"transformers",

|

||||

"opencv-python",

|

||||

"robomimic==0.2.0",

|

||||

"einops",

|

||||

"thop",

|

||||

"robosuite==1.4.0",

|

||||

"mujoco>=2.3.7,<3.0.0",

|

||||

"bddl==1.0.1",

|

||||

"matplotlib",

|

||||

"cloudpickle",

|

||||

"future",

|

||||

"gym",

|

||||

"egl_probe @ git+https://github.com/jadechoghari/egl_probe.git#egg=egl_probe",

|

||||

"libero @ git+https://github.com/jadechoghari/LIBERO.git@main#egg=libero",

|

||||

]

|

||||

# All

|

||||

all = [

|

||||

"lerobot[dynamixel]",

|

||||

@@ -154,7 +173,8 @@ all = [

|

||||

"lerobot[video_benchmark]",

|

||||

"lerobot[aloha]",

|

||||

"lerobot[pusht]",

|

||||

"lerobot[xarm]"

|

||||

"lerobot[xarm]",

|

||||

"lerobot[libero]"

|

||||

]

|

||||

|

||||

[project.scripts]

|

||||

@@ -260,7 +280,7 @@ default.extend-ignore-identifiers-re = [

|

||||

# paths = ["src/lerobot"]

|

||||

|

||||

# [tool.mypy]

|

||||

# python_version = "3.10"

|

||||

# python_version = "3.11"

|

||||

# warn_return_any = true

|

||||

# warn_unused_configs = true

|

||||

# ignore_missing_imports = false

|

||||

|

||||

@@ -72,9 +72,11 @@ class PreTrainedConfig(draccus.ChoiceRegistry, HubMixin, abc.ABC):

|

||||

tags: list[str] | None = None

|

||||

# Add tags to your policy on the hub.

|

||||

license: str | None = None

|

||||

# Either the repo ID of a model hosted on the Hub or a path to a directory containing weights

|

||||

# saved using `Policy.save_pretrained`. If not provided, the policy is initialized from scratch.

|

||||

pretrained_path: str | None = None

|

||||

|

||||

def __post_init__(self):

|

||||

self.pretrained_path = None

|

||||

if not self.device or not is_torch_device_available(self.device):

|

||||

auto_device = auto_select_torch_device()

|

||||

logging.warning(f"Device '{self.device}' is not available. Switching to '{auto_device}'.")

|

||||

|

||||

@@ -30,6 +30,8 @@ class EnvConfig(draccus.ChoiceRegistry, abc.ABC):

|

||||

fps: int = 30

|

||||

features: dict[str, PolicyFeature] = field(default_factory=dict)

|

||||

features_map: dict[str, str] = field(default_factory=dict)

|

||||

multitask_eval: bool = False

|

||||

max_parallel_tasks: int = 5

|

||||

|

||||

@property

|

||||

def type(self) -> str:

|

||||

@@ -271,3 +273,53 @@ class HILEnvConfig(EnvConfig):

|

||||

"use_gamepad": self.use_gamepad,

|

||||

"gripper_penalty": self.gripper_penalty,

|

||||

}

|

||||

|

||||

|

||||

@EnvConfig.register_subclass("libero")

|

||||

@dataclass

|

||||

class LiberoEnv(EnvConfig):

|

||||

task: str = "libero_10" # can also choose libero_spatial, libero_object, etc.

|

||||

fps: int = 30

|

||||

episode_length: int = 520

|

||||

obs_type: str = "pixels_agent_pos"

|

||||

render_mode: str = "rgb_array"

|

||||

camera_name: str = "agentview_image,robot0_eye_in_hand_image"

|

||||

init_states: bool = True

|

||||

multitask_eval: bool = True

|

||||

features: dict[str, PolicyFeature] = field(

|

||||

default_factory=lambda: {

|

||||

"action": PolicyFeature(type=FeatureType.ACTION, shape=(7,)),

|

||||

}

|

||||

)

|

||||

features_map: dict[str, str] = field(

|

||||

default_factory=lambda: {

|

||||

"action": ACTION,

|

||||

"agent_pos": OBS_STATE,

|

||||

"pixels/agentview_image": f"{OBS_IMAGES}.image",

|

||||

"pixels/robot0_eye_in_hand_image": f"{OBS_IMAGES}.image2",

|

||||

}

|

||||

)

|

||||

|

||||

def __post_init__(self):

|

||||

if self.obs_type == "pixels":

|

||||

self.features["pixels/agentview_image"] = PolicyFeature(

|

||||

type=FeatureType.VISUAL, shape=(360, 360, 3)

|

||||

)

|

||||

self.features["pixels/robot0_eye_in_hand_image"] = PolicyFeature(

|

||||

type=FeatureType.VISUAL, shape=(360, 360, 3)

|

||||

)

|

||||

elif self.obs_type == "pixels_agent_pos":

|

||||

self.features["agent_pos"] = PolicyFeature(type=FeatureType.STATE, shape=(8,))

|

||||

self.features["pixels/agentview_image"] = PolicyFeature(

|

||||

type=FeatureType.VISUAL, shape=(360, 360, 3)

|

||||

)

|

||||

self.features["pixels/robot0_eye_in_hand_image"] = PolicyFeature(

|

||||

type=FeatureType.VISUAL, shape=(360, 360, 3)

|

||||

)

|

||||

|

||||

@property

|

||||

def gym_kwargs(self) -> dict:

|

||||

return {

|

||||

"obs_type": self.obs_type,

|

||||

"render_mode": self.render_mode,

|

||||

}

|

||||

|

||||

+35

-12

@@ -17,7 +17,7 @@ import importlib

|

||||

|

||||

import gymnasium as gym

|

||||

|

||||

from lerobot.envs.configs import AlohaEnv, EnvConfig, HILEnvConfig, PushtEnv, XarmEnv

|

||||

from lerobot.envs.configs import AlohaEnv, EnvConfig, HILEnvConfig, LiberoEnv, PushtEnv, XarmEnv

|

||||

|

||||

|

||||

def make_env_config(env_type: str, **kwargs) -> EnvConfig:

|

||||

@@ -29,11 +29,15 @@ def make_env_config(env_type: str, **kwargs) -> EnvConfig:

|

||||

return XarmEnv(**kwargs)

|

||||

elif env_type == "hil":

|

||||

return HILEnvConfig(**kwargs)

|

||||

elif env_type == "libero":

|

||||

return LiberoEnv(**kwargs)

|

||||

else:

|

||||

raise ValueError(f"Policy type '{env_type}' is not available.")

|

||||

|

||||

|

||||

def make_env(cfg: EnvConfig, n_envs: int = 1, use_async_envs: bool = False) -> gym.vector.VectorEnv | None:

|

||||

def make_env(

|

||||

cfg: EnvConfig, n_envs: int = 1, use_async_envs: bool = False

|

||||

) -> gym.vector.VectorEnv | dict[str, dict[int, gym.vector.VectorEnv]]:

|

||||

"""Makes a gym vector environment according to the config.

|

||||

|

||||

Args:

|

||||

@@ -48,24 +52,43 @@ def make_env(cfg: EnvConfig, n_envs: int = 1, use_async_envs: bool = False) -> g

|

||||

|

||||

Returns:

|

||||

gym.vector.VectorEnv: The parallelized gym.env instance.

|

||||

dict[str, dict[int, gym.vector.VectorEnv]]: A mapping from task suite

|

||||

names to indexed vectorized environments (when multitask eval is used).

|

||||

|

||||

"""

|

||||

if n_envs < 1:

|

||||

raise ValueError("`n_envs must be at least 1")

|

||||

raise ValueError("`n_envs` must be at least 1")

|

||||

|

||||

env_cls = gym.vector.AsyncVectorEnv if use_async_envs else gym.vector.SyncVectorEnv

|

||||

|

||||

if "libero" in cfg.type:

|

||||

from lerobot.envs.libero import create_libero_envs

|

||||

|

||||

return create_libero_envs(

|

||||

task=cfg.task,

|

||||

n_envs=n_envs,

|

||||

camera_name=cfg.camera_name,

|

||||

init_states=cfg.init_states,

|

||||

gym_kwargs=cfg.gym_kwargs,

|

||||

env_cls=env_cls,

|

||||

multitask_eval=cfg.multitask_eval,

|

||||

)

|

||||

|

||||

package_name = f"gym_{cfg.type}"

|

||||

|

||||

try:

|

||||

importlib.import_module(package_name)

|

||||

except ModuleNotFoundError as e:

|

||||

print(f"{package_name} is not installed. Please install it with `pip install 'lerobot[{cfg.type}]'`")

|

||||

raise e

|

||||

raise ModuleNotFoundError(

|

||||

f'{package_name} is not installed. Install with: pip install "lerobot[{cfg.type}]"'

|

||||

) from e

|

||||

|

||||

gym_handle = f"{package_name}/{cfg.task}"

|

||||

|

||||

# batched version of the env that returns an observation of shape (b, c)

|

||||

env_cls = gym.vector.AsyncVectorEnv if use_async_envs else gym.vector.SyncVectorEnv

|

||||

env = env_cls(

|

||||

[lambda: gym.make(gym_handle, disable_env_checker=True, **cfg.gym_kwargs) for _ in range(n_envs)]

|

||||

)

|

||||

def _make_one():

|

||||

return gym.make(gym_handle, disable_env_checker=True, **(cfg.gym_kwargs or {}))

|

||||

|

||||

return env

|

||||

vec = env_cls([_make_one for _ in range(n_envs)])

|

||||

|

||||

# normalize to {suite: {task_id: vec_env}} for consistency

|

||||

suite_name = cfg.type # e.g., "pusht", "aloha"

|

||||

return {suite_name: {0: vec}}

|

||||

|

||||

@@ -0,0 +1,497 @@

|

||||

from __future__ import annotations

|

||||

|

||||

import logging

|

||||

import math

|

||||

import os

|

||||

from collections import defaultdict

|

||||

from collections.abc import Callable, Iterable, Mapping, Sequence

|

||||

from itertools import chain

|

||||

from typing import Any

|

||||

|

||||

import gymnasium as gym

|

||||

import numpy as np

|

||||

import torch

|

||||

from gymnasium import spaces

|

||||

from libero.libero import benchmark, get_libero_path

|

||||

from libero.libero.envs import OffScreenRenderEnv

|

||||

|

||||

logger = logging.getLogger(__name__)

|

||||

|

||||

# ---- Helpers -----------------------------------------------------------------

|

||||

|

||||

|

||||

def _parse_camera_names(camera_name: str | Sequence[str]) -> list[str]:

|

||||

"""Normalize camera_name into a non-empty list of strings."""

|

||||

if isinstance(camera_name, str):

|

||||

cams = [c.strip() for c in camera_name.split(",") if c.strip()]

|

||||

elif isinstance(camera_name, (list, tuple)):

|

||||

cams = [str(c).strip() for c in camera_name if str(c).strip()]

|

||||

else:

|

||||

raise TypeError(f"camera_name must be str or sequence[str], got {type(camera_name).__name__}")

|

||||

if not cams:

|

||||

raise ValueError("camera_name resolved to an empty list.")

|

||||

return cams

|

||||

|

||||

|

||||

def _get_suite(name: str):

|

||||

"""Instantiate a LIBERO suite by name with clear validation."""

|

||||

bench = benchmark.get_benchmark_dict()

|

||||

if name not in bench:

|

||||

raise ValueError(f"Unknown LIBERO suite '{name}'. Available: {', '.join(sorted(bench.keys()))}")

|

||||

suite = bench[name]()

|

||||

if not getattr(suite, "tasks", None):

|

||||

raise ValueError(f"Suite '{name}' has no tasks.")

|

||||

return suite

|

||||

|

||||

|

||||

def _select_task_ids(total_tasks: int, task_ids: Iterable[int] | None) -> list[int]:

|

||||

"""Validate/normalize task ids. If None → all tasks."""

|

||||

if task_ids is None:

|

||||

return list(range(total_tasks))

|

||||

ids = sorted({int(t) for t in task_ids})

|

||||

for t in ids:

|

||||

if t < 0 or t >= total_tasks:

|

||||

raise ValueError(f"task_id {t} out of range [0, {total_tasks - 1}].")

|

||||

return ids

|

||||

|

||||

|

||||

def _make_env_fns(

|

||||

*,

|

||||

suite,

|

||||

suite_name: str,

|

||||

task_id: int,

|

||||

n_envs: int,

|

||||

camera_names: list[str],

|

||||

init_states: bool,

|

||||

gym_kwargs: Mapping[str, Any],

|

||||

LiberoEnv: type, # injected to avoid forward ref issues if needed

|

||||

) -> list[Callable[[], LiberoEnv]]:

|

||||

"""Build n_envs factory callables for a single (suite, task_id)."""

|

||||

joined_cams = ",".join(camera_names) # keep backward-compat: downstream expects a string

|

||||

fns: list[Callable[[], LiberoEnv]] = []

|

||||

for i in range(n_envs):

|

||||

|

||||

def _mk(

|

||||

i=i,

|

||||

suite=suite,

|

||||

task_id=task_id,

|

||||

suite_name=suite_name,

|

||||

joined_cams=joined_cams,

|

||||

init_states=init_states,

|

||||

gym_kwargs=dict(gym_kwargs),

|

||||

):

|

||||

return LiberoEnv(

|

||||

task_suite=suite,

|

||||

task_id=task_id,

|

||||

task_suite_name=suite_name,

|

||||

camera_name=joined_cams,

|

||||

init_states=init_states,

|

||||

episode_index=i,

|

||||

**gym_kwargs,

|

||||

)

|

||||

|

||||

fns.append(_mk)

|

||||

return fns

|

||||

|

||||

|

||||

# ---- Main API ----------------------------------------------------------------

|

||||

|

||||

|

||||

def create_libero_envs(

|

||||

task: str,

|

||||

n_envs: int,

|

||||

gym_kwargs: dict[str, Any] | None = None,

|

||||

camera_name: str | Sequence[str] = "agentview_image,robot0_eye_in_hand_image",

|

||||

init_states: bool = True,

|

||||

env_cls: Callable[[Sequence[Callable[[], Any]]], Any] | None = None,

|

||||

multitask_eval: bool = True, # kept for signature compatibility; return type is consistent regardless

|

||||

) -> dict[str, dict[int, Any]]:

|

||||

"""

|

||||

Create vectorized LIBERO environments with a consistent return shape.

|

||||

|

||||

Returns:

|

||||

dict[suite_name][task_id] -> vec_env (env_cls([...]) with exactly n_envs factories)

|

||||

Notes:

|

||||

- n_envs is the number of rollouts *per task* (episode_index = 0..n_envs-1).

|

||||

- `task` can be a single suite or a comma-separated list of suites.

|

||||

- You may pass `task_ids` (list[int]) inside `gym_kwargs` to restrict tasks per suite.

|

||||

"""

|

||||

if env_cls is None or not callable(env_cls):

|

||||

raise ValueError("env_cls must be a callable that wraps a list of environment factory callables.")

|

||||

if not isinstance(n_envs, int) or n_envs <= 0:

|

||||

raise ValueError(f"n_envs must be a positive int; got {n_envs}.")

|

||||

|

||||

gym_kwargs = dict(gym_kwargs or {})

|

||||

task_ids_filter = gym_kwargs.pop("task_ids", None) # optional: limit to specific tasks

|

||||

|

||||

# Avoid circular import/type issues: assume LiberoEnv is defined in this module

|

||||

try:

|

||||

LiberoEnv # type: ignore[name-defined]

|

||||

except NameError:

|

||||

# If LiberoEnv is in the same file, this won't run. If it's elsewhere, import here.

|

||||

exit()

|

||||

# from .libero_env import LiberoEnv # adjust if your class lives in another module

|

||||

|

||||

camera_names = _parse_camera_names(camera_name)

|

||||

suite_names = [s.strip() for s in str(task).split(",") if s.strip()]

|

||||

if not suite_names:

|

||||

raise ValueError("`task` must contain at least one LIBERO suite name.")

|

||||

|

||||

logger.info(

|

||||

"Creating LIBERO envs | suites=%s | n_envs(per task)=%d | init_states=%s | multitask_eval=%s",

|

||||

suite_names,

|

||||

n_envs,

|

||||

init_states,

|

||||

bool(multitask_eval),

|

||||

)

|

||||

if task_ids_filter is not None:

|

||||

logger.info("Restricting to task_ids=%s", task_ids_filter)

|

||||

|

||||

out: dict[str, dict[int, Any]] = defaultdict(dict)

|

||||

|

||||

for suite_name in suite_names:

|

||||

suite = _get_suite(suite_name)

|

||||

total = len(suite.tasks)

|

||||

selected = _select_task_ids(total, task_ids_filter)

|

||||

|

||||

if not selected:

|

||||

raise ValueError(f"No tasks selected for suite '{suite_name}' (available: {total}).")

|

||||

|

||||

for tid in selected:

|

||||

fns = _make_env_fns(

|

||||

suite=suite,

|

||||

suite_name=suite_name,

|

||||

task_id=tid,

|

||||

n_envs=n_envs,

|

||||

camera_names=camera_names,

|

||||

init_states=init_states,

|

||||

gym_kwargs=gym_kwargs,

|

||||

LiberoEnv=LiberoEnv,

|

||||

)

|

||||

out[suite_name][tid] = env_cls(fns)

|

||||

logger.debug("Built vec env | suite=%s | task_id=%d | n_envs=%d", suite_name, tid, n_envs)

|

||||

|

||||

# return plain dicts for predictability

|

||||

return {suite: dict(task_map) for suite, task_map in out.items()}

|

||||

|

||||

|

||||

def quat2axisangle(quat):

|

||||

"""

|

||||

Copied from robosuite: https://github.com/ARISE-Initiative/robosuite/blob/eafb81f54ffc104f905ee48a16bb15f059176ad3/robosuite/utils/transform_utils.py#L490C1-L512C55

|

||||

|

||||

Converts quaternion to axis-angle format.

|

||||

Returns a unit vector direction scaled by its angle in radians.

|

||||

|

||||

Args:

|

||||

quat (np.array): (x,y,z,w) vec4 float angles

|

||||

|

||||

Returns:

|

||||

np.array: (ax,ay,az) axis-angle exponential coordinates

|

||||

"""

|

||||

# clip quaternion

|

||||

if quat[3] > 1.0:

|

||||

quat[3] = 1.0

|

||||

elif quat[3] < -1.0:

|

||||

quat[3] = -1.0

|

||||

|

||||

den = np.sqrt(1.0 - quat[3] * quat[3])

|

||||

if math.isclose(den, 0.0):

|

||||

# This is (close to) a zero degree rotation, immediately return

|

||||

return np.zeros(3)

|

||||

|

||||

return (quat[:3] * 2.0 * math.acos(quat[3])) / den

|

||||

|

||||

|

||||

def get_task_init_states(task_suite, i):

|

||||

init_states_path = os.path.join(

|

||||

get_libero_path("init_states"),

|

||||

task_suite.tasks[i].problem_folder,

|

||||

task_suite.tasks[i].init_states_file,

|

||||

)

|

||||

init_states = torch.load(init_states_path, weights_only=False) # nosec B614

|

||||

return init_states

|

||||

|

||||

|

||||

def get_libero_dummy_action():

|

||||

"""Get dummy/no-op action, used to roll out the simulation while the robot does nothing."""

|

||||

return [0, 0, 0, 0, 0, 0, -1]

|

||||

|

||||

|

||||

OBS_STATE_DIM = 8

|

||||

ACTION_DIM = 7

|

||||

|

||||

|

||||

class LiberoEnv(gym.Env):

|

||||

metadata = {"render_modes": ["rgb_array"], "render_fps": 80}

|

||||

|

||||

def __init__(

|

||||

self,

|

||||

task_suite,

|

||||

task_id,

|

||||

task_suite_name,

|

||||

camera_name="agentview_image,robot0_eye_in_hand_image",

|

||||

obs_type="pixels",

|

||||

render_mode="rgb_array",

|

||||

observation_width=256,

|

||||

observation_height=256,

|

||||

visualization_width=640,

|

||||

visualization_height=480,

|

||||

init_states=True,

|

||||

episode_index=0,

|

||||

):

|

||||

super().__init__()

|

||||

self.task_id = task_id

|

||||

self.obs_type = obs_type

|

||||

self.render_mode = render_mode

|

||||

self.observation_width = observation_width

|

||||

self.observation_height = observation_height

|

||||

self.visualization_width = visualization_width

|

||||

self.visualization_height = visualization_height

|

||||

self.init_states = init_states

|

||||

self.camera_name = camera_name.split(

|

||||

","

|

||||

) # agentview_image (main) or robot0_eye_in_hand_image (wrist)

|

||||

|

||||

# Map raw camera names to "image1" and "image2".

|

||||

# The preprocessing step `preprocess_observation` will then prefix these with `.images.*`,

|

||||

# following the LeRobot convention (e.g., `observation.images.image`, `observation.images.image2`).

|

||||

# This ensures the policy consistently receives observations in the

|

||||

# expected format regardless of the original camera naming.

|

||||

self.camera_name_mapping = {

|

||||

"agentview_image": "image",

|

||||

"robot0_eye_in_hand_image": "image2",

|

||||

}

|

||||

|

||||

self.num_steps_wait = (

|

||||

10 # Do nothing for the first few timesteps to wait for the simulator drops objects

|

||||

)

|

||||

self.episode_index = episode_index

|

||||

|

||||

self._env = self._make_envs_task(task_suite, self.task_id)

|

||||

TASK_SUITE_MAX_STEPS: dict[str, int] = {

|

||||

"libero_spatial": 220, # longest training demo has 193 steps

|

||||

"libero_object": 280, # longest training demo has 254 steps

|

||||

"libero_goal": 300, # longest training demo has 270 steps

|

||||

"libero_10": 520, # longest training demo has 505 steps

|

||||

"libero_90": 400, # longest training demo has 373 steps

|

||||

}

|

||||

default_steps = 500

|

||||

self._max_episode_steps = TASK_SUITE_MAX_STEPS.get(task_suite_name, default_steps)

|

||||

|

||||

images = {}

|

||||

for cam in self.camera_name:

|

||||

images[self.camera_name_mapping[cam]] = spaces.Box(

|

||||

low=0,

|

||||

high=255,

|

||||

shape=(self.observation_height, self.observation_width, 3),

|

||||

dtype=np.uint8,

|

||||

)

|

||||

|

||||

if self.obs_type == "state":

|

||||

raise NotImplementedError(

|

||||

"The 'state' observation type is not supported in LiberoEnv. "

|

||||

"Please switch to an image-based obs_type (e.g. 'pixels', 'pixels_agent_pos')."

|

||||

)

|

||||

|

||||

elif self.obs_type == "pixels":

|

||||

self.observation_space = spaces.Dict(

|

||||

{

|

||||

"pixels": spaces.Dict(images),

|

||||

}

|

||||

)

|

||||

elif self.obs_type == "pixels_agent_pos":

|

||||

self.observation_space = spaces.Dict(

|

||||

{

|

||||

"pixels": spaces.Dict(images),

|

||||

"agent_pos": spaces.Box(

|

||||

low=-1000.0,

|

||||

high=1000.0,

|

||||

shape=(OBS_STATE_DIM,),

|

||||

dtype=np.float64,

|

||||

),

|

||||

}

|

||||

)

|

||||

|

||||

self.action_space = spaces.Box(low=-1, high=1, shape=(ACTION_DIM,), dtype=np.float32)

|

||||

|

||||

def render(self):

|

||||

raw_obs = self._env.env._get_observations()

|

||||

image = self._format_raw_obs(raw_obs)["pixels"]["image"]

|

||||

return image

|

||||

|

||||

def _make_envs_task(self, task_suite, task_id: int = 0):

|

||||

task = task_suite.get_task(task_id)

|

||||

self.task = task.name

|

||||

self.task_description = task.language

|

||||

task_bddl_file = os.path.join(get_libero_path("bddl_files"), task.problem_folder, task.bddl_file)

|

||||

|

||||

env_args = {

|

||||

"bddl_file_name": task_bddl_file,

|

||||

"camera_heights": self.observation_height,

|

||||

"camera_widths": self.observation_width,

|

||||

}

|

||||

env = OffScreenRenderEnv(**env_args)

|

||||

env.reset()

|

||||

if self.init_states:

|

||||

init_states = get_task_init_states(

|

||||

task_suite, task_id

|

||||

) # for benchmarking purpose, we fix the a set of initial states FIXME(mshukor): should be in the reset()?

|

||||

init_state_id = self.episode_index # episode index

|

||||

env.set_init_state(init_states[init_state_id])

|

||||

|

||||

return env

|

||||

|

||||

def _format_raw_obs(self, raw_obs):

|

||||

images = {}

|

||||

for camera_name in self.camera_name:

|

||||

image = raw_obs[camera_name]

|

||||

image = image[::-1, ::-1] # rotate 180 degrees

|

||||

images[self.camera_name_mapping[camera_name]] = image

|

||||

state = np.concatenate(

|

||||

(

|

||||

raw_obs["robot0_eef_pos"],

|

||||

quat2axisangle(raw_obs["robot0_eef_quat"]),

|

||||

raw_obs["robot0_gripper_qpos"],

|

||||

)

|

||||

)

|

||||

agent_pos = state

|

||||

if self.obs_type == "state":

|

||||

raise NotImplementedError(

|

||||

"The 'state' observation type is not supported in LiberoEnv. "

|

||||

"Please switch to an image-based obs_type (e.g. 'pixels', 'pixels_agent_pos')."

|

||||

)

|

||||

elif self.obs_type == "pixels":

|

||||

obs = {"pixels": images.copy()}

|

||||

elif self.obs_type == "pixels_agent_pos":

|

||||

obs = {

|

||||

"pixels": images.copy(),

|

||||

"agent_pos": agent_pos,

|

||||

}

|

||||

return obs

|

||||

|

||||

def reset(self, seed=None, **kwargs):

|

||||

super().reset(seed=seed)

|

||||

|

||||

self._env.seed(seed)

|

||||

raw_obs = self._env.reset()

|

||||

# Do nothing for the first few timesteps to wait for the simulator drops objects

|

||||

for _ in range(self.num_steps_wait):

|

||||

raw_obs, _, _, _ = self._env.step(get_libero_dummy_action())

|

||||

observation = self._format_raw_obs(raw_obs)

|

||||

info = {"is_success": False}

|

||||

return observation, info

|

||||

|

||||

def step(self, action):

|

||||

if action.ndim != 1:

|

||||

raise ValueError(

|

||||

f"Expected action to be 1-D (shape (action_dim,)), "

|

||||

f"but got shape {action.shape} with ndim={action.ndim}"

|

||||

)

|

||||

raw_obs, reward, done, info = self._env.step(action)

|

||||

|

||||

is_success = self._env.check_success()

|

||||

terminated = done or is_success

|

||||

info["is_success"] = done # is_success

|

||||

|

||||

observation = self._format_raw_obs(raw_obs)

|

||||

if done:

|

||||

self.reset()

|

||||

print(self.task, self.task_id, done, is_success)

|

||||

truncated = False

|

||||

return observation, reward, terminated, truncated, info

|

||||

|

||||

def close(self):

|

||||

self._env.close()

|

||||

|

||||

|

||||

def create_libero_envs1(

|

||||

task: str,

|

||||

n_envs: int,

|

||||

gym_kwargs: dict[str, Any] = None,

|

||||

camera_name: str = "agentview_image,robot0_eye_in_hand_image",

|

||||

init_states: bool = True,

|

||||

env_cls: Callable = None,

|

||||

multitask_eval: bool = True,

|

||||

) -> dict[str, dict[str, Any]]:

|

||||

"""

|

||||

Here n_envs is per task and equal to the number of rollouts.

|

||||

Returns:

|

||||

dict[str, dict[str, list[LiberoEnv]]]: keys are task_suite and values are list of LiberoEnv envs.

|

||||

"""

|

||||

print("num envs", n_envs)

|

||||

print("multitask_eval", multitask_eval)

|

||||

print("gym_kwargs", gym_kwargs)

|

||||

if gym_kwargs is None:

|

||||

gym_kwargs = {}

|

||||

|

||||

if not multitask_eval:

|

||||

benchmark_dict = benchmark.get_benchmark_dict()

|

||||

task_suite = benchmark_dict[task]() # can also choose libero_spatial, libero_object, libero_10 etc.

|

||||

tasks_id = list(range(len(task_suite.tasks)))

|

||||

episode_indices = [0 for i in range(len(tasks_id))]

|

||||

if len(tasks_id) == 1:

|

||||

tasks_id = [tasks_id[0] for _ in range(n_envs)]

|

||||

episode_indices = list(range(n_envs))

|

||||

elif len(tasks_id) < n_envs and n_envs % len(tasks_id) == 0:

|

||||

n_repeat = n_envs // len(tasks_id)

|

||||

print("n_repeat", n_repeat)

|

||||

episode_indices = []

|

||||

for _ in range(len(tasks_id)):

|

||||

episode_indices.extend(list(range(n_repeat)))

|

||||

tasks_id = list(chain.from_iterable([[item] * n_repeat for item in tasks_id]))

|

||||

elif n_envs < len(tasks_id):

|

||||

tasks_id = tasks_id[:n_envs]

|

||||

episode_indices = list(range(n_envs))[:n_envs]

|

||||

print(f"WARNING: n_envs < len(tasks_id), evaluating only on {tasks_id}")

|

||||

print(f"Creating Libero envs with task ids {tasks_id} from suite {task}")

|

||||

assert n_envs == len(tasks_id), (

|

||||

f"len(n_envs) and tasks_id should be the same, got {n_envs} and {len(tasks_id)}"

|

||||

)

|

||||

return env_cls(

|

||||

[

|

||||

lambda i=i: LiberoEnv(

|

||||

task_suite=task_suite,

|

||||

task_id=tasks_id[i],

|

||||

task_suite_name=task,

|

||||

camera_name=camera_name,

|

||||

init_states=init_states,

|

||||

episode_index=episode_indices[i],

|

||||

**gym_kwargs,

|

||||

)

|

||||

for i in range(n_envs)

|

||||

]

|

||||

)

|

||||

else:

|

||||

envs = defaultdict(dict)

|

||||

benchmark_dict = benchmark.get_benchmark_dict()

|

||||

task = task.split(",")

|

||||

for _task in task:

|

||||

task_suite = benchmark_dict[

|

||||

_task

|

||||

]() # can also choose libero_spatial, libero_object, libero_10 etc.

|

||||

tasks_ids = list(range(len(task_suite.tasks)))

|

||||

for tasks_id in tasks_ids:

|

||||

episode_indices = list(range(n_envs))

|

||||

print(

|

||||

f"Creating Libero envs with task ids {tasks_id} from suite {_task}, episode_indices: {episode_indices}"

|

||||

)

|

||||

envs_list = [

|

||||

(

|

||||

lambda i=i,

|

||||

task_suite=task_suite,

|

||||

tasks_id=tasks_id,

|

||||

_task=_task,

|

||||

episode_indices=episode_indices: LiberoEnv(

|

||||

task_suite=task_suite,

|

||||

task_id=tasks_id,

|

||||

task_suite_name=_task,

|

||||

camera_name=camera_name,

|

||||

init_states=init_states,

|

||||

episode_index=episode_indices[i],

|

||||

**gym_kwargs,

|

||||

)

|

||||

)

|

||||

for i in range(n_envs)

|

||||

]

|

||||

envs[_task][tasks_id] = env_cls(envs_list)

|

||||

return envs

|

||||

@@ -134,3 +134,49 @@ def add_envs_task(env: gym.vector.VectorEnv, observation: dict[str, Any]) -> dic

|

||||

num_envs = observation[list(observation.keys())[0]].shape[0]

|

||||

observation["task"] = ["" for _ in range(num_envs)]

|

||||

return observation

|

||||

|

||||

|

||||

def _close_single_env(env: Any) -> None:

|

||||

"""Try to close a single env object if it exposes .close()."""

|

||||

try:

|

||||

close_fn = getattr(env, "close", None)

|

||||

if callable(close_fn):

|

||||

close_fn()

|

||||

except Exception as exc:

|

||||

# Best-effort close: log but don't raise

|

||||

LOG.debug("Exception while closing env %s: %s", env, exc)

|

||||

|

||||

|

||||

def close_envs(env_or_collection: Any) -> None:

|

||||

"""

|

||||

Close a single env or any nested structure of envs.

|

||||

|

||||

Accepts:

|

||||

- a single env with .close()

|

||||

- a Mapping of things (e.g. dict)

|

||||

- a Sequence of things (list/tuple) but NOT str/bytes

|

||||

- nested combinations of the above

|

||||

|

||||

This is intentionally permissive and best-effort: it will swallow exceptions

|

||||

encountered while closing individual envs and continue.

|

||||

"""

|

||||

# Guard: single object with close()

|

||||

if hasattr(env_or_collection, "close") and not isinstance(env_or_collection, (Mapping, Sequence)):

|

||||

_close_single_env(env_or_collection)

|

||||

return

|

||||

|

||||

# Mapping (e.g., {suite: {task_id: vec_env}})

|

||||

if isinstance(env_or_collection, Mapping):

|

||||

for v in env_or_collection.values():

|

||||

close_envs(v)

|

||||

return

|

||||

|

||||

# Sequence (list/tuple) but skip str/bytes

|

||||

if isinstance(env_or_collection, Sequence) and not isinstance(env_or_collection, (str, bytes)):

|

||||

for v in env_or_collection:

|

||||

close_envs(v)

|

||||

return

|

||||

|

||||

# Fallback: try to close if possible

|

||||

if hasattr(env_or_collection, "close"):

|

||||

_close_single_env(env_or_collection)

|

||||

|

||||

@@ -27,7 +27,9 @@ from lerobot.envs.utils import env_to_policy_features

|

||||

from lerobot.policies.act.configuration_act import ACTConfig

|

||||

from lerobot.policies.diffusion.configuration_diffusion import DiffusionConfig

|

||||

from lerobot.policies.pi0.configuration_pi0 import PI0Config

|

||||

from lerobot.policies.pi0_openpi.configuration_pi0openpi import PI0OpenPIConfig

|

||||

from lerobot.policies.pi0fast.configuration_pi0fast import PI0FASTConfig

|

||||

from lerobot.policies.pi05_openpi.configuration_pi05openpi import PI05OpenPIConfig

|

||||

from lerobot.policies.pretrained import PreTrainedPolicy

|

||||

from lerobot.policies.sac.configuration_sac import SACConfig

|

||||

from lerobot.policies.sac.reward_model.configuration_classifier import RewardClassifierConfig

|

||||

@@ -62,6 +64,14 @@ def get_policy_class(name: str) -> PreTrainedPolicy:

|

||||

from lerobot.policies.pi0fast.modeling_pi0fast import PI0FASTPolicy

|

||||

|

||||

return PI0FASTPolicy

|

||||

elif name == "pi0_openpi":

|

||||

from lerobot.policies.pi0_openpi.modeling_pi0openpi import PI0OpenPIPolicy

|

||||

|

||||

return PI0OpenPIPolicy

|

||||

elif name == "pi05_openpi":

|

||||

from lerobot.policies.pi05_openpi.modeling_pi05openpi import PI05OpenPIPolicy

|

||||

|

||||

return PI05OpenPIPolicy

|

||||

elif name == "sac":

|

||||

from lerobot.policies.sac.modeling_sac import SACPolicy

|

||||

|

||||

@@ -91,6 +101,10 @@ def make_policy_config(policy_type: str, **kwargs) -> PreTrainedConfig:

|

||||

return PI0Config(**kwargs)

|

||||

elif policy_type == "pi0fast":

|

||||

return PI0FASTConfig(**kwargs)

|

||||

elif policy_type == "pi0_openpi":

|

||||

return PI0OpenPIConfig(**kwargs)

|

||||

elif policy_type == "pi05_openpi":

|

||||

return PI05OpenPIConfig(**kwargs)

|

||||

elif policy_type == "sac":

|

||||

return SACConfig(**kwargs)

|

||||

elif policy_type == "smolvla":

|

||||

@@ -172,7 +186,5 @@ def make_policy(

|

||||

|

||||

policy.to(cfg.device)

|

||||

assert isinstance(policy, nn.Module)

|

||||

|

||||

# policy = torch.compile(policy, mode="reduce-overhead")

|

||||

|

||||

return policy

|

||||

|

||||

@@ -0,0 +1,92 @@

|

||||

# π₀.₅ (pi05)

|

||||

|

||||

This repository contains the Hugging Face port of **π₀.₅**, adapted from [OpenPI](https://github.com/Physical-Intelligence/openpi) by the Physical Intelligence.

|

||||

It is designed as a **Vision-Language-Action model with open-world generalization**.

|

||||

|

||||

---

|

||||

|

||||

### ⚠️ WARNING ⚠️

|

||||

|

||||